Guides

- 1: Advanced Networking

- 2: Air-gapped Environments

- 3: Configuring Certificate Authorities

- 4: Configuring Containerd

- 5: Configuring Corporate Proxies

- 6: Configuring Network Connectivity

- 7: Configuring Pull Through Cache

- 8: Configuring the Cluster Endpoint

- 9: Configuring Wireguard Network

- 10: Customizing the Kernel

- 11: Customizing the Root Filesystem

- 12: Deploying Metrics Server

- 13: Disaster Recovery

- 14: Discovery

- 15: Disk Encryption

- 16: Editing Machine Configuration

- 17: KubeSpan

- 18: Managing PKI

- 19: Resetting a Machine

- 20: Role-based access control (RBAC)

- 21: Storage

- 22: Troubleshooting Control Plane

- 23: Upgrading Kubernetes

- 24: Upgrading Talos

- 25: Virtual (shared) IP

1 - Advanced Networking

Static Addressing

Static addressing consists of specifying the addresses, the routes (remember to add your default gateway), and the interface.

Most likely you’ll also want to define the nameservers so you have properly functioning DNS.

machine:
  network:
    hostname: talos
    nameservers:
      - 10.0.0.1
    interfaces:
      - interface: eth0
        addresses:
          - 10.0.0.201/8
        mtu: 8765
        routes:
          - network: 0.0.0.0/0
            gateway: 10.0.0.1
      - interface: eth1
        ignore: true
  time:
    servers:
      - time.cloudflare.com

Additional Addresses for an Interface

In some environments you may need to set additional addresses on an interface. In the following example, we set two additional addresses on the loopback interface.

machine:
  network:
    interfaces:
      - interface: lo
        addresses:
          - 192.168.0.21/24
          - 10.2.2.2/24

Bonding

The following example shows how to create a bonded interface.

machine:
  network:
    interfaces:
      - interface: bond0
        dhcp: true
        bond:
          mode: 802.3ad
          lacpRate: fast
          xmitHashPolicy: layer3+4
          miimon: 100
          updelay: 200
          downdelay: 200
          interfaces:
            - eth0
            - eth1

VLANs

To set up VLANs on a specific device, list them in an array under that device. The master device may be configured without addressing by setting dhcp to false.

machine:
  network:
    interfaces:
      - interface: eth0
        dhcp: false
        vlans:
          - vlanId: 100
            addresses:
              - "192.168.2.10/28"
            routes:
              - network: 0.0.0.0/0
                gateway: 192.168.2.1

2 - Air-gapped Environments

In this guide we will create a Talos cluster running in an air-gapped environment with all the required images being pulled from an internal registry.

We will use the QEMU provisioner available in talosctl to create a local cluster, but the same approach could be used to deploy Talos in bigger air-gapped networks.

Requirements

The following are the requirements for this guide:

- Docker 18.03 or greater

- Requirements for the Talos QEMU cluster

Identifying Images

In air-gapped environments, access to the public Internet is restricted, so Talos can’t pull images from public Docker registries (docker.io, ghcr.io, etc.).

We need to identify the images required to install and run Talos.

The same strategy can be used for images required by custom workloads running on the cluster.

The talosctl images command provides a list of default images used by the Talos cluster (with default configuration settings).

To print the list of images, run:

talosctl images

This list contains images required by a default deployment of Talos. There might be additional images required for the workloads running on this cluster, and those should be added to this list.

Preparing the Internal Registry

As access to the public registries is restricted, we have to run an internal Docker registry. In this guide, we will launch the registry on the same machine using Docker:

$ docker run -d -p 6000:5000 --restart always --name registry-airgapped registry:2

1bf09802bee1476bc463d972c686f90a64640d87dacce1ac8485585de69c91a5

This registry will be accepting connections on port 6000 on the host IPs. The registry is empty by default, so we have to fill it with the images required by Talos.

First, we pull all the images to our local Docker daemon:

$ for image in `talosctl images`; do docker pull $image; done

v0.12.0-amd64: Pulling from coreos/flannel

Digest: sha256:6d451d92c921f14bfb38196aacb6e506d4593c5b3c9d40a8b8a2506010dc3e10

...

All images are now stored in the Docker daemon store:

$ docker images

ghcr.io/talos-systems/install-cni v0.3.0-12-g90722c3 980d36ee2ee1 5 days ago 79.7MB

k8s.gcr.io/kube-proxy-amd64 v1.20.0 33c60812eab8 2 weeks ago 118MB

...

Now we need to re-tag them so that we can push them to our local registry.

We are going to replace the first component of the image name (before the first slash) with our registry endpoint 127.0.0.1:6000:

$ for image in `talosctl images`; do \
    docker tag $image `echo $image | sed -E 's#^[^/]+/#127.0.0.1:6000/#'`; \
  done

As the next step, we push images to the internal registry:

$ for image in `talosctl images`; do \
    docker push `echo $image | sed -E 's#^[^/]+/#127.0.0.1:6000/#'`; \
  done
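The pull, re-tag and push steps can also be wrapped into small shell functions. The following is a minimal sketch, not part of talosctl: the function names retag_image and mirror_images and the REGISTRY variable are hypothetical, and the sed expression is the same one used in the loops of this guide. An image reference without a slash (for example registry:2) is left unchanged by the rewrite.

```shell
#!/bin/sh
# Sketch: rewrite the registry component (everything before the first slash)
# of an image reference so that it points at the internal registry.
# retag_image, mirror_images and REGISTRY are illustrative names.

REGISTRY="${REGISTRY:-127.0.0.1:6000}"

retag_image() {
    # $1 is an image reference such as ghcr.io/talos-systems/installer:v0.13.0
    printf '%s\n' "$1" | sed -E "s#^[^/]+/#${REGISTRY}/#"
}

mirror_images() {
    # Pull, re-tag and push every image passed as an argument.
    # Stops at the first docker command that fails.
    for image in "$@"; do
        target=$(retag_image "$image")
        docker pull "$image" &&
            docker tag "$image" "$target" &&
            docker push "$target" || return 1
    done
}
```

With the functions loaded into the current shell, the whole copy could then be run as mirror_images $(talosctl images).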

We can now verify that the images are pushed to the registry:

$ curl http://127.0.0.1:6000/v2/_catalog

{"repositories":["autonomy/kubelet","coredns","coreos/flannel","etcd-development/etcd","kube-apiserver-amd64","kube-controller-manager-amd64","kube-proxy-amd64","kube-scheduler-amd64","talos-systems/install-cni","talos-systems/installer"]}

Note: images in the registry don’t have the registry endpoint prefix anymore.
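To check the catalog against the image list without reading the JSON by eye, the repository name can be derived from each image reference and looked up in the catalog output. This is a rough sketch under a few assumptions: repo_name and catalog_has are hypothetical helpers, image references are assumed to carry a :tag rather than a digest, and the lookup is a plain fixed-string match on the quoted repository name rather than real JSON parsing.

```shell
#!/bin/sh
# Sketch: map an image reference to the repository name the registry
# catalog reports, then look that name up in the catalog JSON.
# repo_name and catalog_has are illustrative names.

repo_name() {
    # Drop the registry component (up to the first slash) and the trailing :tag.
    printf '%s\n' "$1" | sed -E 's#^[^/]+/##; s#:[^:/]+$##'
}

catalog_has() {
    # $1: repository name; the catalog JSON is read from stdin.
    # Matches the name including its surrounding double quotes.
    grep -qF "\"$1\""
}
```

For example, curl -s http://127.0.0.1:6000/v2/_catalog | catalog_has "$(repo_name ghcr.io/talos-systems/installer:v0.13.0)" returns success only if talos-systems/installer is listed.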

Launching Talos in an Air-gapped Environment

For Talos to use the internal registry, we use the registry mirror feature to redirect all the image pull requests to the internal registry. This means that the registry endpoint (as the first component of the image reference) gets ignored, and all pull requests are sent directly to the specified endpoint.

We are going to use a QEMU-based Talos cluster for this guide, but the same approach works with Docker-based clusters as well. As QEMU-based clusters go through the Talos install process, they model a real air-gapped environment more closely.

The talosctl cluster create command provides conveniences for common configuration options.

The only required flag for this guide is --registry-mirror '*'=http://10.5.0.1:6000 which redirects every pull request to the internal registry.

The endpoint being used is 10.5.0.1, as this is the default bridge interface address which will be routable from the QEMU VMs (127.0.0.1 IP will be pointing to the VM itself).

$ sudo -E talosctl cluster create --provisioner=qemu --registry-mirror '*'=http://10.5.0.1:6000 --install-image=ghcr.io/talos-systems/installer:v0.13.0

validating CIDR and reserving IPs

generating PKI and tokens

creating state directory in "/home/smira/.talos/clusters/talos-default"

creating network talos-default

creating load balancer

creating dhcpd

creating master nodes

creating worker nodes

waiting for API

...

Note: --install-image should match the image which was copied into the internal registry in the previous step.

You can verify that the cluster is air-gapped by inspecting the registry logs: docker logs -f registry-airgapped.

Closing Notes

Running in an air-gapped environment might require additional configuration changes, for example using custom settings for DNS and NTP servers.

When scaling this guide to a bare-metal environment, the following Talos config snippet could be used as an equivalent of the --registry-mirror flag above:

machine:
  ...
  registries:
    mirrors:
      '*':
        endpoints:
          - http://10.5.0.1:6000/
...

Other implementations of Docker registry can be used in place of the Docker registry image used above to run the registry.

If required, auth can be configured for the internal registry (and custom TLS certificates if needed).

3 - Configuring Certificate Authorities

Appending the Certificate Authority

Add the PEM-encoded certificate to the configuration of each machine:

machine:
  ...
  files:
    - content: |
        -----BEGIN CERTIFICATE-----
        ...
        -----END CERTIFICATE-----
      permissions: 0644
      path: /etc/ssl/certs/ca-certificates
      op: append

4 - Configuring Containerd

The base containerd configuration expects to merge in any additional configs present in /var/cri/conf.d/*.toml.

An example of exposing metrics

Add the following to each machine config:

machine:
  ...
  files:
    - content: |
        [metrics]
        address = "0.0.0.0:11234"
      path: /var/cri/conf.d/metrics.toml
      op: create

Create the cluster as usual and confirm that metrics are now exposed on this port:

$ curl 127.0.0.1:11234/v1/metrics

# HELP container_blkio_io_service_bytes_recursive_bytes The blkio io service bytes recursive

# TYPE container_blkio_io_service_bytes_recursive_bytes gauge

container_blkio_io_service_bytes_recursive_bytes{container_id="0677d73196f5f4be1d408aab1c4125cf9e6c458a4bea39e590ac779709ffbe14",device="/dev/dm-0",major="253",minor="0",namespace="k8s.io",op="Async"} 0

container_blkio_io_service_bytes_recursive_bytes{container_id="0677d73196f5f4be1d408aab1c4125cf9e6c458a4bea39e590ac779709ffbe14",device="/dev/dm-0",major="253",minor="0",namespace="k8s.io",op="Discard"} 0

...

...

5 - Configuring Corporate Proxies

Appending the Certificate Authority of MITM Proxies

Add the PEM-encoded certificate to the configuration of each machine:

machine:
  ...
  files:
    - content: |
        -----BEGIN CERTIFICATE-----
        ...
        -----END CERTIFICATE-----
      permissions: 0644
      path: /etc/ssl/certs/ca-certificates
      op: append

Configuring a Machine to Use the Proxy

To make use of a proxy:

machine:
  env:
    http_proxy: <http proxy>
    https_proxy: <https proxy>
    no_proxy: <no proxy>

Additionally, configure the DNS nameservers and NTP servers:

machine:
  env:
  ...
  time:
    servers:
      - <server 1>
      - <server ...>
      - <server n>
  ...
  network:
    nameservers:
      - <ip 1>
      - <ip ...>
      - <ip n>

6 - Configuring Network Connectivity

The simplest way to deploy Talos is by ensuring that all the remote components of the system (talosctl, the control plane nodes, and worker nodes) have layer 2 connectivity.

This is not always possible, however, so this page lays out the minimal network access that is required to configure and operate a Talos cluster.

Note: These are the ports required for Talos specifically, and should be configured in addition to the ports required by Kubernetes. See the Kubernetes docs for information on the ports used by Kubernetes itself.

Control plane node(s)

| Protocol | Direction | Port Range | Purpose | Used By |
|---|---|---|---|---|
| TCP | Inbound | 50000* | apid | talosctl |
| TCP | Inbound | 50001* | trustd | Control plane nodes, worker nodes |

Ports marked with a * are not currently configurable, but that may change in the future. Follow along here.

Worker node(s)

| Protocol | Direction | Port Range | Purpose | Used By |
|---|---|---|---|---|
| TCP | Inbound | 50001* | trustd | Control plane nodes |

Ports marked with a * are not currently configurable, but that may change in the future. Follow along here.

7 - Configuring Pull Through Cache

In this guide we will create a set of local caching Docker registry proxies to minimize local cluster startup time.

When running Talos locally, pulling images from Docker registries might take a significant amount of time. We spin up local caching pass-through registries to cache images and configure a local Talos cluster to use those proxies. A similar approach might be used to run Talos in production in air-gapped environments. It can also be used to verify that all the images are available in local registries.

Requirements

The following are the requirements for creating the set of caching proxies:

Launch the Caching Docker Registry Proxies

Talos pulls from docker.io, k8s.gcr.io, quay.io, gcr.io, and ghcr.io by default.

If your configuration is different, you might need to modify the commands below:

docker run -d -p 5000:5000 \
    -e REGISTRY_PROXY_REMOTEURL=https://registry-1.docker.io \
    --restart always \
    --name registry-docker.io registry:2

docker run -d -p 5001:5000 \
    -e REGISTRY_PROXY_REMOTEURL=https://k8s.gcr.io \
    --restart always \
    --name registry-k8s.gcr.io registry:2

docker run -d -p 5002:5000 \
    -e REGISTRY_PROXY_REMOTEURL=https://quay.io \
    --restart always \
    --name registry-quay.io registry:2.5

docker run -d -p 5003:5000 \
    -e REGISTRY_PROXY_REMOTEURL=https://gcr.io \
    --restart always \
    --name registry-gcr.io registry:2

docker run -d -p 5004:5000 \
    -e REGISTRY_PROXY_REMOTEURL=https://ghcr.io \
    --restart always \
    --name registry-ghcr.io registry:2

Note: Proxies are started as docker containers, and they’re automatically configured to start with the Docker daemon. Please note that the quay.io proxy doesn’t support the recent Docker image schema, so we run an older registry image version (2.5).

As a registry container can only handle a single upstream Docker registry, we launch a container per upstream, each on its own host port (5000, 5001, 5002, 5003 and 5004).

Using Caching Registries with QEMU Local Cluster

With a QEMU local cluster, a bridge interface is created on the host. As registry containers expose their ports on the host, we can use bridge IP to direct proxy requests.

sudo talosctl cluster create --provisioner qemu \
    --registry-mirror docker.io=http://10.5.0.1:5000 \
    --registry-mirror k8s.gcr.io=http://10.5.0.1:5001 \
    --registry-mirror quay.io=http://10.5.0.1:5002 \
    --registry-mirror gcr.io=http://10.5.0.1:5003 \
    --registry-mirror ghcr.io=http://10.5.0.1:5004

The Talos local cluster should now start pulling via caching registries.

This can be verified via registry logs, e.g. docker logs -f registry-docker.io.

The first time the cluster boots, images are pulled and cached, so the next cluster boot should be much faster.

Note: 10.5.0.1 is the bridge IP with the default network (10.5.0.0/24). If using a custom --cidr, the value should be adjusted accordingly.
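When the bridge IP differs from the default, the list of --registry-mirror flags can be generated instead of edited by hand. The sketch below assumes the five caching proxies were started exactly as in this guide (host ports 5000 to 5004, in the same registry order); mirror_flags is a hypothetical helper name, not a talosctl feature.

```shell
#!/bin/sh
# Sketch: print one --registry-mirror flag per upstream registry.
# mirror_flags is an illustrative helper. The registry order and the
# 5000..5004 port assignment follow the docker run commands in this guide.

mirror_flags() {
    host="$1"   # bridge IP reachable from the cluster nodes
    port=5000   # host port of the first caching proxy
    for registry in docker.io k8s.gcr.io quay.io gcr.io ghcr.io; do
        printf '%s %s=http://%s:%s\n' --registry-mirror "$registry" "$host" "$port"
        port=$((port + 1))
    done
}
```

The output can be passed through unquoted command substitution so that each flag and value becomes a separate argument, for example: sudo talosctl cluster create --provisioner qemu $(mirror_flags 10.5.0.1).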

Using Caching Registries with docker Local Cluster

With a docker local cluster we can use the docker bridge IP; the default value for that IP is 172.17.0.1.

On Linux, the docker bridge address can be inspected with ip addr show docker0.

talosctl cluster create --provisioner docker \
    --registry-mirror docker.io=http://172.17.0.1:5000 \
    --registry-mirror k8s.gcr.io=http://172.17.0.1:5001 \
    --registry-mirror quay.io=http://172.17.0.1:5002 \
    --registry-mirror gcr.io=http://172.17.0.1:5003 \
    --registry-mirror ghcr.io=http://172.17.0.1:5004

Cleaning Up

To clean up, run:

docker rm -f registry-docker.io

docker rm -f registry-k8s.gcr.io

docker rm -f registry-quay.io

docker rm -f registry-gcr.io

docker rm -f registry-ghcr.io

Note: Removing docker registry containers also removes the image cache. So if you plan to use caching registries, keep the containers running.

8 - Configuring the Cluster Endpoint

In this section, we will step through the configuration of a Talos based Kubernetes cluster. There are three major components we will configure:

- apid and talosctl

- the master nodes

- the worker nodes

Talos enforces a high level of security by using mutual TLS for authentication and authorization.

We recommend that the configuration of Talos be performed by a cluster owner. A cluster owner should be a person of authority within an organization, perhaps a director, manager, or senior member of a team. They are responsible for storing the root CA, and distributing the PKI for authorized cluster administrators.

Recommended settings

Talos runs great out of the box, but if you tweak some minor settings it will make your life a lot easier in the future. This is not a requirement, but rather a document to explain some key settings.

Endpoint

To configure the talosctl endpoint, it is recommended you use a resolvable DNS name.

This way, if you decide to upgrade to a multi-controlplane cluster, you only have to add the IP address to the hostname configuration.

The configuration can be done either on a load balancer or simply through DNS.

For example:

This is in the config file for the cluster, e.g. controlplane.yaml and worker.yaml. For more details, please see: v1alpha1 endpoint configuration

.....
cluster:
  controlPlane:
    endpoint: https://endpoint.example.local:6443
.....

If you have a DNS name as the endpoint, you can upgrade your Talos cluster with multiple controlplanes in the future (if you don’t have a multi-controlplane setup from the start). Using a DNS name generates the corresponding certificates (Kubernetes and Talos) for the correct hostname.

9 - Configuring Wireguard Network

Quick Start

The quickest way to try out Wireguard is to use talosctl cluster create command:

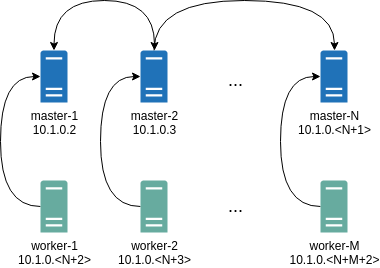

talosctl cluster create --wireguard-cidr 10.1.0.0/24

It will automatically generate Wireguard network configuration for each node with the following network topology:

Where all controlplane nodes will be used as Wireguard servers which listen on port 51111.

All controlplanes and workers will connect to all controlplanes.

It also sets PersistentKeepalive to 5 seconds to establish controlplanes to workers connection.

After the cluster is deployed it should be possible to verify Wireguard network connectivity.

It is possible to deploy a container with hostNetwork enabled, then run kubectl exec -it <container> -- /bin/bash and either run:

ping 10.1.0.2

Or install wireguard-tools package and run:

wg show

The wg show command should output something like this:

interface: wg0
  public key: OMhgEvNIaEN7zeCLijRh4c+0Hwh3erjknzdyvVlrkGM=
  private key: (hidden)
  listening port: 47946

peer: 1EsxUygZo8/URWs18tqB5FW2cLVlaTA+lUisKIf8nh4=
  endpoint: 10.5.0.2:51111
  allowed ips: 10.1.0.0/24
  latest handshake: 1 minute, 55 seconds ago
  transfer: 3.17 KiB received, 3.55 KiB sent
  persistent keepalive: every 5 seconds

It is also possible to use generated configuration as a reference by pulling generated config files using:

talosctl read -n 10.5.0.2 /system/state/config.yaml > controlplane.yaml

talosctl read -n 10.5.0.3 /system/state/config.yaml > worker.yaml

Manual Configuration

All Wireguard configuration can be done by changing Talos machine config files. As an example we will use this official Wireguard quick start tutorial.

Key Generation

This part is exactly the same:

wg genkey | tee privatekey | wg pubkey > publickey

Setting up Device

Inline comments show relations between configs and wg quickstart tutorial commands:

...
network:
  interfaces:
    ...
    # ip link add dev wg0 type wireguard
    - interface: wg0
      mtu: 1500
      # ip address add dev wg0 192.168.2.1/24
      addresses:
        - 192.168.2.1/24
      # wg set wg0 listen-port 51820 private-key /path/to/private-key peer ABCDEF... allowed-ips 192.168.88.0/24 endpoint 209.202.254.14:8172
      wireguard:
        privateKey: <privatekey file contents>
        listenPort: 51820
        peers:
          - allowedIPs:
              - 192.168.88.0/24
            endpoint: 209.202.254.14:8172
            publicKey: ABCDEF...
...

When networkd gets this configuration, it will create the device, configure it, and bring it up (equivalent to ip link set up dev wg0).

All supported config parameters are described in the Machine Config Reference.

10 - Customizing the Kernel

The installer image contains ONBUILD instructions that handle the following:

- the decompression, and unpacking of the initramfs.xz

- the unsquashing of the rootfs

- the copying of new rootfs files

- the squashing of the new rootfs

- and the packing, and compression of the new initramfs.xz

When used as a base image, the installer will perform the above steps automatically with the requirement that a customization stage be defined in the Dockerfile.

Build and push your own kernel:

git clone https://github.com/talos-systems/pkgs.git

cd pkgs

make kernel-menuconfig USERNAME=_your_github_user_name_

docker login ghcr.io --username _your_github_user_name_

make kernel USERNAME=_your_github_user_name_ PUSH=true

Using a multi-stage Dockerfile we can define the customization stage and build FROM the installer image:

FROM scratch AS customization

COPY --from=<custom kernel image> /lib/modules /lib/modules

FROM ghcr.io/talos-systems/installer:latest

COPY --from=<custom kernel image> /boot/vmlinuz /usr/install/${TARGETARCH}/vmlinuz

When building the image, the customization stage will automatically be copied into the rootfs.

The customization stage is not limited to a single COPY instruction.

In fact, you can do whatever you would like in this stage, but keep in mind that everything in / will be copied into the rootfs.

To build the image, run:

DOCKER_BUILDKIT=0 docker build --build-arg RM="/lib/modules" -t installer:kernel .

Note: buildkit has a bug (#816); to disable buildkit, use DOCKER_BUILDKIT=0.

Now that we have a custom installer we can build Talos for the specific platform we wish to deploy to.

11 - Customizing the Root Filesystem

The installer image contains ONBUILD instructions that handle the following:

- the decompression, and unpacking of the initramfs.xz

- the unsquashing of the rootfs

- the copying of new rootfs files

- the squashing of the new rootfs

- and the packing, and compression of the new initramfs.xz

When used as a base image, the installer will perform the above steps automatically with the requirement that a customization stage be defined in the Dockerfile.

For example, say we have an image that contains the contents of a library we wish to add to the Talos rootfs.

We need to define a stage with the name customization:

FROM scratch AS customization

COPY --from=<name|index> <src> <dest>

Using a multi-stage Dockerfile we can define the customization stage and build FROM the installer image:

FROM scratch AS customization

COPY --from=<name|index> <src> <dest>

FROM ghcr.io/talos-systems/installer:latest

When building the image, the customization stage will automatically be copied into the rootfs.

The customization stage is not limited to a single COPY instruction.

In fact, you can do whatever you would like in this stage, but keep in mind that everything in / will be copied into the rootfs.

Note: <dest> is the path, relative to the rootfs, where you wish to place the contents of <src>.

To build the image, run:

docker build --squash -t <organization>/installer:latest .

In the case that you need to perform some cleanup before adding additional files to the rootfs, you can specify the RM build-time variable:

docker build --squash --build-arg RM="[<path> ...]" -t <organization>/installer:latest .

This will perform a rm -rf on the specified paths relative to the rootfs.

Note: RM must be a whitespace delimited list.

The resulting image can be used to:

- generate an image for any of the supported providers

- perform bare-metal installs

- perform upgrades

We will step through common customizations in the remainder of this section.

12 - Deploying Metrics Server

Metrics Server enables use of the Horizontal Pod Autoscaler and Vertical Pod Autoscaler. It does this by gathering metrics data from the kubelets in a cluster. By default, the certificates in use by the kubelets will not be recognized by metrics-server. This can be solved by either configuring metrics-server to do no validation of the TLS certificates, or by modifying the kubelet configuration to rotate its certificates and use ones that will be recognized by metrics-server.

Node Configuration

To enable kubelet certificate rotation, all nodes should have the following Machine Config snippet:

machine:
  kubelet:
    extraArgs:
      rotate-server-certificates: true

Install During Bootstrap

We will want to ensure that new certificates for the kubelets are approved automatically. This can easily be done with the Kubelet Serving Certificate Approver, which will automatically approve the Certificate Signing Requests generated by the kubelets.

We can have Kubelet Serving Certificate Approver and metrics-server installed on the cluster automatically during bootstrap by adding the following snippet to the Cluster Config of the node that will be handling the bootstrap process:

cluster:
  extraManifests:
    - https://raw.githubusercontent.com/alex1989hu/kubelet-serving-cert-approver/main/deploy/standalone-install.yaml
    - https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

Install After Bootstrap

If you choose not to use extraManifests to install Kubelet Serving Certificate Approver and metrics-server during bootstrap, you can install them once the cluster is online using kubectl:

kubectl apply -f https://raw.githubusercontent.com/alex1989hu/kubelet-serving-cert-approver/main/deploy/standalone-install.yaml

kubectl apply -f https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

13 - Disaster Recovery

The etcd database backs the Kubernetes control plane state, so if the etcd service is unavailable, the Kubernetes control plane goes down, and the cluster is not recoverable until etcd is recovered along with its contents.

The etcd consistency model builds around the consensus protocol Raft, so for highly-available control plane clusters, the loss of one control plane node doesn’t impact cluster health.

In general, etcd stays up as long as a sufficient number of nodes to maintain quorum are up.

For a three control plane node Talos cluster, this means that the cluster tolerates a failure of any single node, but losing more than one node at the same time leads to complete loss of service.

Because of that, it is important to take routine backups of etcd state to have a snapshot to recover the cluster from in case of catastrophic failure.
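The quorum reasoning above follows from simple majority arithmetic: a cluster of N members needs floor(N / 2) + 1 members up, so it tolerates floor((N - 1) / 2) failures. The following sketch only illustrates that arithmetic; the function names etcd_quorum and etcd_fault_tolerance are hypothetical and are not talosctl or etcd commands.

```shell
#!/bin/sh
# Sketch: majority (quorum) arithmetic for an etcd cluster of N members.
# etcd_quorum and etcd_fault_tolerance are illustrative helper names.

etcd_quorum() {
    # Members that must be up: floor(N / 2) + 1
    echo $(( $1 / 2 + 1 ))
}

etcd_fault_tolerance() {
    # Members that can fail while a majority remains: floor((N - 1) / 2)
    echo $(( ($1 - 1) / 2 ))
}
```

For three control plane nodes this gives a quorum of two and a tolerance of one failed node, matching the statement above; for a single control plane node the tolerance is zero.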

Backup

Snapshotting etcd Database

Create a consistent snapshot of the etcd database with the talosctl etcd snapshot command:

$ talosctl -n <IP> etcd snapshot db.snapshot

etcd snapshot saved to "db.snapshot" (2015264 bytes)

snapshot info: hash c25fd181, revision 4193, total keys 1287, total size 3035136

Note: the filename db.snapshot is arbitrary.

This database snapshot can be taken on any healthy control plane node (with IP address <IP> in the example above), as all etcd instances contain exactly the same data.

It is recommended to configure etcd snapshots to be created on some schedule to allow point-in-time recovery using the latest snapshot.
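One way to schedule snapshots is a small wrapper script invoked from cron or a similar scheduler. The sketch below is only an illustration: snapshot_path and take_snapshot are hypothetical helper names, the etcd-<timestamp>.snapshot naming scheme is an assumption, and the only Talos command used is the talosctl etcd snapshot command shown above.

```shell
#!/bin/sh
# Sketch: take a timestamped etcd snapshot from a healthy control plane node.
# snapshot_path and take_snapshot are illustrative names; the file naming
# scheme is an assumption, not a Talos convention.

snapshot_path() {
    # $1: backup directory, $2: timestamp string
    printf '%s/etcd-%s.snapshot\n' "${1%/}" "$2"
}

take_snapshot() {
    # $1: IP of a healthy control plane node, $2: backup directory
    file=$(snapshot_path "$2" "$(date -u +%Y%m%dT%H%M%SZ)")
    talosctl -n "$1" etcd snapshot "$file"
}
```

Calling take_snapshot <IP> /var/backups/etcd from a scheduled job would then write one snapshot file per run; retention and off-site copies are left to the operator.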

Disaster Database Snapshot

If the etcd cluster is not healthy, the talosctl etcd snapshot command might fail.

In that case, copy the database snapshot directly from the control plane node:

talosctl -n <IP> cp /var/lib/etcd/member/snap/db .

This snapshot might not be fully consistent (if the etcd process is running), but it allows for disaster recovery when the latest regular snapshot is not available.

Machine Configuration

Machine configuration might be required to recover the node after hardware failure. Back up the Talos node machine configuration with the command:

talosctl -n <IP> get mc v1alpha1 -o yaml | yq eval '.spec' -

Recovery

Before starting a disaster recovery procedure, make sure that the etcd cluster can’t be recovered:

- get the etcd cluster member list on all healthy control plane nodes with the talosctl -n IP etcd members command and compare across all members.

- query etcd health across control plane nodes with talosctl -n IP service etcd.

If the quorum can be restored, restoring quorum might be a better strategy than performing full disaster recovery procedure.

Latest Etcd Snapshot

Get hold of the latest etcd database snapshot.

If a snapshot is not fresh enough, create a database snapshot (see above), even if the etcd cluster is unhealthy.

Init Node

Make sure that there are no control plane nodes with machine type init:

$ talosctl -n <IP1>,<IP2>,... get machinetype

NODE NAMESPACE TYPE ID VERSION TYPE

172.20.0.2 config MachineType machine-type 2 controlplane

172.20.0.4 config MachineType machine-type 2 controlplane

172.20.0.3 config MachineType machine-type 2 controlplane

Nodes with the init type are incompatible with the etcd recovery procedure.

An init node can be converted to the controlplane type with the talosctl edit mc --on-reboot command, followed by a node reboot with the talosctl reboot command.
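The check for init nodes can be scripted by filtering the table printed by talosctl get machinetype. The sketch below assumes the column layout shown in the example output above (node address in the first column, machine type in the last); find_init_nodes is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: list nodes whose machine type is "init".
# Assumes the table layout shown above: a header row, the node address in
# the first column and the machine type in the last column.
# find_init_nodes is an illustrative name.

find_init_nodes() {
    # Reads the table on stdin, skips the header row, and prints the
    # first column of every row whose last column is "init".
    awk 'NR > 1 && $NF == "init" { print $1 }'
}
```

For example, talosctl -n <IP1>,<IP2>,... get machinetype | find_init_nodes prints nothing when no init nodes remain.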

Preparing Control Plane Nodes

If some control plane nodes experienced hardware failure, replace them with new nodes. Use machine configuration backup to re-create the nodes with the same secret material and control plane settings to allow workers to join the recovered control plane.

If a control plane node is healthy but etcd isn’t, wipe the node’s EPHEMERAL partition to remove the etcd data directory (make sure a database snapshot is taken before doing this):

talosctl -n <IP> reset --graceful=false --reboot --system-labels-to-wipe=EPHEMERAL

At this point, all control plane nodes should boot up, and the etcd service should be in the Preparing state.

The Kubernetes control plane endpoint should be pointed to the new control plane nodes if there were any changes to the node addresses.

Recovering from the Backup

Make sure all etcd service instances are in Preparing state:

$ talosctl -n <IP> service etcd

NODE 172.20.0.2

ID etcd

STATE Preparing

HEALTH ?

EVENTS [Preparing]: Running pre state (17s ago)

[Waiting]: Waiting for service "cri" to be "up", time sync (18s ago)

[Waiting]: Waiting for service "cri" to be "up", service "networkd" to be "up", time sync (20s ago)
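Checking the state on several nodes by eye is error-prone, so the STATE line can be extracted from the output instead. This is a minimal sketch that assumes the output format shown above, where the service state follows the STATE label on its own line; etcd_state is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: extract the service state from `talosctl service etcd` output.
# Assumes the format shown above, with a line of the form "STATE <value>".
# etcd_state is an illustrative name.

etcd_state() {
    # Reads the command output on stdin and prints the value that follows
    # the first STATE label.
    awk '$1 == "STATE" { print $2; exit }'
}
```

A per-node check could then compare "$(talosctl -n <IP> service etcd | etcd_state)" with Preparing before running the bootstrap command.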

Execute the bootstrap command against any control plane node passing the path to the etcd database snapshot:

$ talosctl -n <IP> bootstrap --recover-from=./db.snapshot

recovering from snapshot "./db.snapshot": hash c25fd181, revision 4193, total keys 1287, total size 3035136

Note: if the database snapshot was copied out directly from the etcd data directory using talosctl cp, add the flag --recover-skip-hash-check to skip the integrity check on restore.

The Talos node should print matching information in the kernel log:

recovering etcd from snapshot: hash c25fd181, revision 4193, total keys 1287, total size 3035136

{"level":"info","msg":"restoring snapshot","path":"/var/lib/etcd.snapshot","wal-dir":"/var/lib/etcd/member/wal","data-dir":"/var/lib/etcd","snap-dir":"/var/li}

{"level":"info","msg":"restored last compact revision","meta-bucket-name":"meta","meta-bucket-name-key":"finishedCompactRev","restored-compact-revision":3360}

{"level":"info","msg":"added member","cluster-id":"a3390e43eb5274e2","local-member-id":"0","added-peer-id":"eb4f6f534361855e","added-peer-peer-urls":["https:/}

{"level":"info","msg":"restored snapshot","path":"/var/lib/etcd.snapshot","wal-dir":"/var/lib/etcd/member/wal","data-dir":"/var/lib/etcd","snap-dir":"/var/lib/etcd/member/snap"}

Now the etcd service should become healthy on the bootstrap node, the Kubernetes control plane components should start, and the control plane endpoint should become available.

The remaining control plane nodes join the etcd cluster once the control plane endpoint is up.

Single Control Plane Node Cluster

This guide applies to single control plane clusters as well.

In fact, it is much more important to take regular snapshots of the etcd database in the single control plane node case, as loss of the control plane node might render the whole cluster irrecoverable without a backup.

14 - Discovery

Registries

Peers are aggregated from a number of optional registries.

By default, Talos will use the kubernetes and service registries.

Either one can be disabled.

To disable a registry, set disabled to true (this option is the same for all registries):

For example, to disable the service registry:

cluster:
  discovery:
    enabled: true
    registries:
      service:
        disabled: true

Disabling all registries effectively disables member discovery altogether.

As of v0.13, Talos supports the kubernetes and service registries.

Kubernetes registry uses Kubernetes Node resource data and additional Talos annotations:

$ kubectl describe node <nodename>

Annotations: cluster.talos.dev/node-id: Utoh3O0ZneV0kT2IUBrh7TgdouRcUW2yzaaMl4VXnCd

networking.talos.dev/assigned-prefixes: 10.244.0.0/32,10.244.0.1/24

networking.talos.dev/self-ips: 172.20.0.2,fd83:b1f7:fcb5:2802:8c13:71ff:feaf:7c94

...

Service registry uses external Discovery Service to exchange encrypted information about cluster members.

Resource Definitions

Talos v0.13 introduces seven new resources that can be used to introspect the new discovery and KubeSpan features.

Discovery

Identities

The node’s unique identity is a random 32-byte value, base62 encoded.

Note: Using base62 allows the ID to be URL encoded without having to use the ambiguous URL-encoding version of base64.

The identity can be obtained with:

$ talosctl get identities -o yaml

...

spec:

nodeId: Utoh3O0ZneV0kT2IUBrh7TgdouRcUW2yzaaMl4VXnCd

Node identity is used as the unique Affiliate identifier.

The node identity resource is stored in the STATE partition in the node-identity.yaml file.

Node identity is preserved across reboots and upgrades, but it is regenerated if the node is reset (wiped).
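
As a rough illustration of what "base62 encoded random 32 bytes" means, the sketch below encodes random bytes with a 62-character alphanumeric alphabet. The alphabet ordering and the lack of padding are assumptions made for this example; the encoding Talos actually uses may differ in both, so the output is not expected to match a real node ID byte for byte.

```python
import secrets

# Assumed alphabet ordering (digits, uppercase, lowercase).
# The ordering used by Talos itself may be different.
ALPHABET = "0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz"


def base62_encode(data: bytes) -> str:
    """Encode bytes as base62 by treating them as one big-endian integer.

    Leading zero bytes are not preserved by this simple scheme.
    """
    number = int.from_bytes(data, "big")
    if number == 0:
        return ALPHABET[0]
    digits = []
    while number > 0:
        number, remainder = divmod(number, 62)
        digits.append(ALPHABET[remainder])
    return "".join(reversed(digits))


def new_node_id() -> str:
    """Generate a hypothetical node identity from 32 random bytes."""
    return base62_encode(secrets.token_bytes(32))


if __name__ == "__main__":
    print(new_node_id())
```

Because the result contains only letters and digits, it can be placed in a URL path or query string without any escaping.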

Affiliates

An affiliate is a proposed member: a node that has the same cluster ID and secret.

$ talosctl get affiliates

ID VERSION HOSTNAME MACHINE TYPE ADDRESSES

2VfX3nu67ZtZPl57IdJrU87BMjVWkSBJiL9ulP9TCnF 2 talos-default-master-2 controlplane ["172.20.0.3","fd83:b1f7:fcb5:2802:986b:7eff:fec5:889d"]

6EVq8RHIne03LeZiJ60WsJcoQOtttw1ejvTS6SOBzhUA 2 talos-default-worker-1 worker ["172.20.0.5","fd83:b1f7:fcb5:2802:cc80:3dff:fece:d89d"]

NVtfu1bT1QjhNq5xJFUZl8f8I8LOCnnpGrZfPpdN9WlB 2 talos-default-worker-2 worker ["172.20.0.6","fd83:b1f7:fcb5:2802:2805:fbff:fe80:5ed2"]

Utoh3O0ZneV0kT2IUBrh7TgdouRcUW2yzaaMl4VXnCd 4 talos-default-master-1 controlplane ["172.20.0.2","fd83:b1f7:fcb5:2802:8c13:71ff:feaf:7c94"]

b3DebkPaCRLTLLWaeRF1ejGaR0lK3m79jRJcPn0mfA6C 2 talos-default-master-3 controlplane ["172.20.0.4","fd83:b1f7:fcb5:2802:248f:1fff:fe5c:c3f"]

The Affiliate whose ID matches the node identity is populated from the node data; the other Affiliates are pulled from the registries.

Enabled discovery registries run in parallel and discovered data is merged to build the list presented above.

Details about data coming from each registry can be queried from the cluster-raw namespace:

$ talosctl get affiliates --namespace=cluster-raw

ID VERSION HOSTNAME MACHINE TYPE ADDRESSES

k8s/2VfX3nu67ZtZPl57IdJrU87BMjVWkSBJiL9ulP9TCnF 3 talos-default-master-2 controlplane ["172.20.0.3","fd83:b1f7:fcb5:2802:986b:7eff:fec5:889d"]

k8s/6EVq8RHIne03LeZiJ60WsJcoQOtttw1ejvTS6SOBzhUA 2 talos-default-worker-1 worker ["172.20.0.5","fd83:b1f7:fcb5:2802:cc80:3dff:fece:d89d"]

k8s/NVtfu1bT1QjhNq5xJFUZl8f8I8LOCnnpGrZfPpdN9WlB 2 talos-default-worker-2 worker ["172.20.0.6","fd83:b1f7:fcb5:2802:2805:fbff:fe80:5ed2"]

k8s/b3DebkPaCRLTLLWaeRF1ejGaR0lK3m79jRJcPn0mfA6C 3 talos-default-master-3 controlplane ["172.20.0.4","fd83:b1f7:fcb5:2802:248f:1fff:fe5c:c3f"]

service/2VfX3nu67ZtZPl57IdJrU87BMjVWkSBJiL9ulP9TCnF 23 talos-default-master-2 controlplane ["172.20.0.3","fd83:b1f7:fcb5:2802:986b:7eff:fec5:889d"]

service/6EVq8RHIne03LeZiJ60WsJcoQOtttw1ejvTS6SOBzhUA 26 talos-default-worker-1 worker ["172.20.0.5","fd83:b1f7:fcb5:2802:cc80:3dff:fece:d89d"]

service/NVtfu1bT1QjhNq5xJFUZl8f8I8LOCnnpGrZfPpdN9WlB 20 talos-default-worker-2 worker ["172.20.0.6","fd83:b1f7:fcb5:2802:2805:fbff:fe80:5ed2"]

service/b3DebkPaCRLTLLWaeRF1ejGaR0lK3m79jRJcPn0mfA6C 14 talos-default-master-3 controlplane ["172.20.0.4","fd83:b1f7:fcb5:2802:248f:1fff:fe5c:c3f"]

Each Affiliate ID is prefixed with k8s/ for data coming from the Kubernetes registry and with service/ for data coming from the discovery service.
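
The relationship between the raw, per-registry records and the merged list can be sketched as follows. This is only an illustration of the idea: the record shape (hostname and addresses keys) and the merge rule (union of addresses, first hostname wins) are assumptions for the example, not the merge logic Talos implements.

```python
def merge_affiliates(raw):
    """Merge raw per-registry records into one record per affiliate ID.

    raw maps prefixed IDs such as "k8s/<id>" or "service/<id>" to a dict
    with "hostname" and "addresses" keys (a hypothetical record shape).
    """
    merged = {}
    for prefixed_id, record in raw.items():
        # Split "k8s/<id>" into the registry name and the affiliate ID.
        registry, _, affiliate_id = prefixed_id.partition("/")
        entry = merged.setdefault(
            affiliate_id,
            {"hostname": record["hostname"], "addresses": [], "sources": []},
        )
        entry["sources"].append(registry)
        # Keep each address once, in the order it was first seen.
        for address in record["addresses"]:
            if address not in entry["addresses"]:
                entry["addresses"].append(address)
    return merged


if __name__ == "__main__":
    raw = {
        "k8s/abc": {"hostname": "worker-1", "addresses": ["172.20.0.5"]},
        "service/abc": {"hostname": "worker-1", "addresses": ["172.20.0.5", "fd83::1"]},
    }
    print(merge_affiliates(raw))
```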

Members

A member is an affiliate that has been approved to join the cluster. The members of the cluster can be obtained with:

$ talosctl get members

ID VERSION HOSTNAME MACHINE TYPE OS ADDRESSES

talos-default-master-1 2 talos-default-master-1 controlplane Talos (v0.13.0) ["172.20.0.2","fd83:b1f7:fcb5:2802:8c13:71ff:feaf:7c94"]

talos-default-master-2 1 talos-default-master-2 controlplane Talos (v0.13.0) ["172.20.0.3","fd83:b1f7:fcb5:2802:986b:7eff:fec5:889d"]

talos-default-master-3 1 talos-default-master-3 controlplane Talos (v0.13.0) ["172.20.0.4","fd83:b1f7:fcb5:2802:248f:1fff:fe5c:c3f"]

talos-default-worker-1 1 talos-default-worker-1 worker Talos (v0.13.0) ["172.20.0.5","fd83:b1f7:fcb5:2802:cc80:3dff:fece:d89d"]

talos-default-worker-2 1 talos-default-worker-2 worker Talos (v0.13.0) ["172.20.0.6","fd83:b1f7:fcb5:2802:2805:fbff:fe80:5ed2"]

15 - Disk Encryption

It is possible to enable encryption for system disks at the OS level.

As of this writing, only STATE and EPHEMERAL partitions can be encrypted.

STATE contains the most sensitive node data: secrets and certs.

EPHEMERAL partition may contain some sensitive workload data.

Data is encrypted using LUKS2, which is provided by the Linux kernel modules and cryptsetup utility.

The operating system will run additional setup steps when encryption is enabled.

If the disk encryption is enabled for the STATE partition, the system will:

- Save STATE encryption config as JSON in the META partition.

- Before mounting the STATE partition, load encryption configs either from the machine config or from the META partition. Note that the machine config is always preferred over the META one.

- Before mounting the STATE partition, format and encrypt it. This occurs only if the STATE partition is empty and has no filesystem.

If the disk encryption is enabled for the EPHEMERAL partition, the system will:

- Get the encryption config from the machine config.

- Before mounting the EPHEMERAL partition, encrypt and format it. This occurs only if the EPHEMERAL partition is empty and has no filesystem.
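
The two rules described above (machine config wins over the META copy, and only an empty partition without a filesystem is encrypted) can be written down as a small sketch. The function names and the use of None for "no config available" are hypothetical and exist only for this illustration.

```python
def choose_state_encryption_config(machine_config, meta_config):
    """Prefer the machine config copy; fall back to the META copy.

    Either argument may be None when that source is not available.
    """
    return machine_config if machine_config is not None else meta_config


def needs_format_and_encrypt(is_empty, has_filesystem):
    """Encrypt only a partition that is empty and has no filesystem."""
    return is_empty and not has_filesystem


if __name__ == "__main__":
    print(choose_state_encryption_config(None, {"keys": [{"slot": 0}]}))
    print(needs_format_and_encrypt(is_empty=True, has_filesystem=False))
```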

Configuration

Disk encryption is currently disabled by default. To enable it, modify the machine configuration with the following options:

machine:
  ...
  systemDiskEncryption:
    ephemeral:
      keys:
        - nodeID: {}
          slot: 0
    state:
      keys:
        - nodeID: {}
          slot: 0

Encryption Keys

Note: What the LUKS2 docs call “keys” are, in reality, passphrases. When a passphrase is added, LUKS2 runs argon2 to create an actual key from that passphrase.

LUKS2 supports up to 32 encryption keys and it is possible to specify all of them in the machine configuration. Talos always tries to sync the keys list defined in the machine config with the actual keys defined for the LUKS2 partition. So if you update the keys list, keep at least one key unchanged so that it can be used for key management.

When you define a key you should specify the key kind and the slot:

machine:
  ...
  state:
    keys:
      - nodeID: {} # key kind
        slot: 1
  ephemeral:
    keys:
      - static:
          passphrase: supersecret
        slot: 0

Note that key order plays no role in which key slot is used. Every key must always have a slot defined.

Encryption Key Kinds

Talos supports two kinds of keys:

- nodeID, which is generated using the node UUID and the partition label (note that if the node UUID is not really random it will fail the entropy check).

- static, which you define right in the configuration.

Note: Use static keys only if your STATE partition is encrypted and only for the EPHEMERAL partition. For the STATE partition, a static key would be stored in the META partition, which is not encrypted.

Key Rotation

Rotating keys requires running talosctl apply-config a couple of times, because a working key must always be maintained while the other keys are changed around it.

So, for example, first add a new key:

machine:
  ...
  ephemeral:
    keys:
      - static:
          passphrase: oldkey
        slot: 0
      - static:
          passphrase: newkey
        slot: 1
  ...

Run:

talosctl apply-config -n <node> -f config.yaml

Then remove the old key:

machine:
  ...
  ephemeral:
    keys:
      - static:
          passphrase: newkey
        slot: 1
  ...

Run:

talosctl apply-config -n <node> -f config.yaml
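
Before applying each rotation step, it can help to check the new keys list against the current one. The sketch below is a hypothetical pre-flight check, not part of talosctl: it encodes the rules stated in this guide (every key has a slot, at most 32 keys, one unchanged key is kept) plus the assumption that slots must be unique and numbered 0 to 31.

```python
MAX_LUKS2_SLOTS = 32  # LUKS2 supports up to 32 keys


def validate_key_update(current_keys, new_keys):
    """Return a list of problems with moving from current_keys to new_keys.

    Each key is a dict such as {"slot": 0, "static": {"passphrase": "oldkey"}}
    or {"slot": 1, "nodeID": {}}, mirroring the machine config layout.
    """
    problems = []
    slots = [key.get("slot") for key in new_keys]
    if any(slot is None for slot in slots):
        problems.append("every key must have a slot defined")
    defined = [slot for slot in slots if slot is not None]
    # Assumption: two keys cannot share a slot and slots are numbered 0..31.
    if len(set(defined)) != len(defined):
        problems.append("slots must be unique")
    if any(not 0 <= slot < MAX_LUKS2_SLOTS for slot in defined):
        problems.append("slot out of range")
    # One key must stay unchanged so it can unlock the partition
    # while the other keys are added or removed.
    if current_keys and not any(key in current_keys for key in new_keys):
        problems.append("at least one existing key must be kept unchanged")
    return problems


if __name__ == "__main__":
    old = [{"slot": 0, "static": {"passphrase": "oldkey"}}]
    new = {"slot": 1, "static": {"passphrase": "newkey"}}
    print(validate_key_update(old, old + [new]))  # step 1: add the new key
    print(validate_key_update(old + [new], [new]))  # step 2: drop the old key
    print(validate_key_update(old, [new]))  # skipping step 1 is rejected
```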

Going from Unencrypted to Encrypted and Vice Versa

Ephemeral Partition

There is no in-place encryption support for the partitions right now, so to avoid losing any data only empty partitions can be encrypted.

As such, migration from unencrypted to encrypted needs some additional handling, especially around explicitly wiping partitions.

- apply-config should be called with the --on-reboot flag.

- The partition should be wiped after apply-config, but before the reboot.

Edit your machine config and add the encryption configuration:

vim config.yaml

Apply the configuration with --on-reboot flag:

talosctl apply-config -f config.yaml -n <node ip> --on-reboot

Wipe the partition you’re going to encrypt:

talosctl reset --system-labels-to-wipe EPHEMERAL -n <node ip> --reboot=true

That’s it! After you run the last command, the partition will be wiped and the node will reboot. During the next boot the system will encrypt the partition.

State Partition

Calling wipe against the STATE partition will make the node lose the config, so the previous flow is not going to work.

The flow should be to first wipe the STATE partition:

talosctl reset --system-labels-to-wipe STATE -n <node ip> --reboot=true

The node will enter maintenance mode. Then run apply-config with the --insecure flag:

talosctl apply-config --insecure -n <node ip> -f config.yaml

After installation is complete the node should encrypt the STATE partition.

16 - Editing Machine Configuration

Talos node state is fully defined by machine configuration. Initial configuration is delivered to the node at bootstrap time, but configuration can be updated while the node is running.

Note: Be sure that config is persisted so that configuration updates are not overwritten on reboots. Configuration persistence has been enabled by default since Talos 0.5 (persist: true in machine configuration).

There are three talosctl commands which facilitate machine configuration updates:

- talosctl apply-config to apply configuration from the file

- talosctl edit machineconfig to launch an editor with existing node configuration, make changes and apply configuration back

- talosctl patch machineconfig to apply automated machine configuration via JSON patch

Each of these commands can operate in one of three modes:

- apply change with a reboot (default): update configuration, reboot Talos node to apply configuration change

- apply change immediately (--immediate flag): change is applied immediately without a reboot, only .cluster sub-tree of the machine configuration can be updated in Talos 0.9

- apply change on next reboot (--on-reboot): change is staged to be applied after a reboot, but node is not rebooted

Note: applying change on next reboot (--on-reboot) doesn’t modify current node configuration, so next call to talosctl edit machineconfig --on-reboot will not see changes

talosctl apply-config

This command is mostly used to submit initial machine configuration to the node (generated by talosctl gen config).

It can be used to apply new configuration from the file to the running node as well, but most of the time it’s not convenient, as it doesn’t operate on the current node machine configuration.

Example:

talosctl -n <IP> apply-config -f config.yaml

Command apply-config can also be invoked as apply machineconfig:

talosctl -n <IP> apply machineconfig -f config.yaml

Applying machine configuration immediately (without a reboot):

talosctl -n <IP> apply machineconfig -f config.yaml --immediate

talosctl edit machineconfig

Command talosctl edit loads current machine configuration from the node and launches configured editor to modify the config.

If config hasn’t been changed in the editor (or if updated config is empty), update is not applied.

Note: Talos uses environment variables TALOS_EDITOR, EDITOR to pick up the editor preference. If environment variables are missing, vi editor is used by default.
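
The editor lookup described in the note can be sketched as below. The precedence shown (TALOS_EDITOR before EDITOR) follows the order in which the variables are listed and is an assumption for this illustration; the function name is hypothetical.

```python
import os


def pick_editor(environ=None):
    """Return the editor command chosen from the environment.

    Checks TALOS_EDITOR first, then EDITOR (assumed precedence),
    and falls back to vi when neither is set to a non-empty value.
    """
    env = os.environ if environ is None else environ
    for name in ("TALOS_EDITOR", "EDITOR"):
        value = env.get(name)
        if value:
            return value
    return "vi"


if __name__ == "__main__":
    print(pick_editor())
```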

Example:

talosctl -n <IP> edit machineconfig

Configuration can be edited for multiple nodes if multiple IP addresses are specified:

talosctl -n <IP1>,<IP2>,... edit machineconfig

Applying machine configuration change immediately (without a reboot):

talosctl -n <IP> edit machineconfig --immediate

talosctl patch machineconfig

Command talosctl patch works similarly to the talosctl edit command: it loads the current machine configuration, but instead of launching the configured editor it applies a JSON patch to the configuration and writes the result back to the node.

Example, updating kubelet version (with a reboot):

$ talosctl -n <IP> patch machineconfig -p '[{"op": "replace", "path": "/machine/kubelet/image", "value": "ghcr.io/talos-systems/kubelet:v1.20.5"}]'

patched mc at the node <IP>

Updating kube-apiserver version in immediate mode (without a reboot):

$ talosctl -n <IP> patch machineconfig --immediate -p '[{"op": "replace", "path": "/cluster/apiServer/image", "value": "k8s.gcr.io/kube-apiserver:v1.20.5"}]'

patched mc at the node <IP>

Patch might be applied to multiple nodes when multiple IPs are specified:

talosctl -n <IP1>,<IP2>,... patch machineconfig --immediate -p '[{...}]'
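
To make the effect of a JSON patch "replace" operation concrete, here is a minimal sketch that applies one to a nested dictionary standing in for the machine configuration. It handles only object keys (no array indexes) and only the replace operation; it illustrates the patch semantics and is not how talosctl is implemented. The starting image tag in the usage example is a placeholder.

```python
import copy


def apply_replace(document, path, value):
    """Apply a JSON Patch "replace" operation and return a new document.

    path is a JSON Pointer such as "/machine/kubelet/image".
    The target must already exist, as "replace" requires.
    """
    if not path.startswith("/"):
        raise ValueError("path must start with '/'")
    # Unescape JSON Pointer tokens: ~1 means "/", ~0 means "~".
    tokens = [t.replace("~1", "/").replace("~0", "~") for t in path[1:].split("/")]
    result = copy.deepcopy(document)
    target = result
    for token in tokens[:-1]:
        target = target[token]
    if tokens[-1] not in target:
        raise KeyError(f"cannot replace missing path: {path}")
    target[tokens[-1]] = value
    return result


if __name__ == "__main__":
    config = {"machine": {"kubelet": {"image": "ghcr.io/talos-systems/kubelet:old"}}}
    patch = {
        "op": "replace",
        "path": "/machine/kubelet/image",
        "value": "ghcr.io/talos-systems/kubelet:v1.20.5",
    }
    print(apply_replace(config, patch["path"], patch["value"]))
```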

Recovering from Node Boot Failures

If a Talos node fails to boot because of wrong configuration (for example, control plane endpoint is incorrect), configuration can be updated to fix the issue.

If the boot sequence is still running, Talos might refuse to apply the config in default mode.

In that case, --on-reboot mode can be used together with the talosctl reboot command to trigger a reboot and apply the configuration update.

17 - KubeSpan

KubeSpan is a feature of Talos that automates the setup and maintenance of a full mesh WireGuard network for your cluster, giving you the ability to operate hybrid Kubernetes clusters that can span the edge, datacenter, and cloud. Management of keys and discovery of peers can be completely automated for a zero-touch experience that makes it simple and easy to create hybrid clusters.

Video Walkthrough

To learn more about KubeSpan, see the video below:

To see a live demo of KubeSpan, see one of the videos below:

Enabling

Creating a New Cluster

To generate configuration files for a new cluster, we can use the --with-kubespan flag in talosctl gen config.

This will enable peer discovery and KubeSpan.

...
  # Provides machine specific network configuration options.
  network:
    # Configures KubeSpan feature.
    kubespan:
      enabled: true # Enable the KubeSpan feature.
...
  # Configures cluster member discovery.
  discovery:
    enabled: true # Enable the cluster membership discovery feature.
    # Configure registries used for cluster member discovery.
    registries:
      # Kubernetes registry uses Kubernetes API server to discover cluster members and stores additional information
      kubernetes: {}
      # Service registry is using an external service to push and pull information about cluster members.
      service: {}
...
# Provides cluster specific configuration options.
cluster:
  id: yui150Ogam0pdQoNZS2lZR-ihi8EWxNM17bZPktJKKE= # Globally unique identifier for this cluster.
  secret: dAmFcyNmDXusqnTSkPJrsgLJ38W8oEEXGZKM0x6Orpc= # Shared secret of cluster.

The default discovery service is an external service hosted for free by Sidero Labs. The default value is https://discovery.talos.dev/. Contact Sidero Labs if you need to run this service privately.

Upgrading an Existing Cluster

In order to enable KubeSpan for an existing cluster, upgrade to the latest v0.13. Once your cluster is upgraded, the configuration of each node must contain the globally unique identifier, the shared secret for the cluster, and have KubeSpan and discovery enabled.

Note: Discovery can be used without KubeSpan, but KubeSpan requires at least one discovery registry.

Talos v0.11 or Less

If you are migrating from Talos v0.11 or earlier, you need to generate a cluster ID and secret.

To generate an id:

$ openssl rand -base64 32

EUsCYz+oHNuBppS51P9aKSIOyYvIPmbZK944PWgiyMQ=

To generate a secret:

$ openssl rand -base64 32

AbdsWjY9i797kGglghKvtGdxCsdllX9CemLq+WGVeaw=
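
If openssl is not available, an equivalent value can be produced in other ways. The sketch below generates 32 random bytes and base64 encodes them, which is what the openssl commands above do; the function name is hypothetical.

```python
import base64
import secrets


def random_base64(num_bytes=32):
    """Return num_bytes of cryptographically secure randomness, base64 encoded."""
    return base64.b64encode(secrets.token_bytes(num_bytes)).decode("ascii")


if __name__ == "__main__":
    print("id:    ", random_base64())
    print("secret:", random_base64())
```

Each call returns a fresh value, so run it once for the id and once for the secret.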

Now, update the configuration of each node in the cluster with the generated id and secret.

You should end up with the addition of something like this (your id and secret should be different):

cluster:
  id: EUsCYz+oHNuBppS51P9aKSIOyYvIPmbZK944PWgiyMQ=
  secret: AbdsWjY9i797kGglghKvtGdxCsdllX9CemLq+WGVeaw=

Note: This can be applied in immediate mode (no reboot required) by passing --immediate to either the edit machineconfig or apply-config subcommands.

Talos v0.12

Enable kubespan and discovery.

machine:
  network:
    kubespan:
      enabled: true
cluster:
  discovery:
    enabled: true

Resource Definitions

KubeSpanIdentities

A node’s WireGuard identities can be obtained with:

$ talosctl get kubespanidentities -o yaml

...

spec:

address: fd83:b1f7:fcb5:2802:8c13:71ff:feaf:7c94/128

subnet: fd83:b1f7:fcb5:2802::/64

privateKey: gNoasoKOJzl+/B+uXhvsBVxv81OcVLrlcmQ5jQwZO08=

publicKey: NzW8oeIH5rJyY5lefD9WRoHWWRr/Q6DwsDjMX+xKjT4=

Talos automatically configures a unique IPv6 address for each node in the cluster-specific IPv6 ULA prefix.

A WireGuard private key is generated for the node. The private key never leaves the node, while the public key is published through cluster discovery.

KubeSpanIdentity is persisted across reboots and upgrades in the STATE partition in the file kubespan-identity.yaml.

KubeSpanPeerSpecs

A node’s WireGuard peers can be obtained with:

$ talosctl get kubespanpeerspecs

ID VERSION LABEL ENDPOINTS

06D9QQOydzKrOL7oeLiqHy9OWE8KtmJzZII2A5/FLFI= 2 talos-default-master-2 ["172.20.0.3:51820"]

THtfKtfNnzJs1nMQKs5IXqK0DFXmM//0WMY+NnaZrhU= 2 talos-default-master-3 ["172.20.0.4:51820"]

nVHu7l13uZyk0AaI1WuzL2/48iG8af4WRv+LWmAax1M= 2 talos-default-worker-2 ["172.20.0.6:51820"]

zXP0QeqRo+CBgDH1uOBiQ8tA+AKEQP9hWkqmkE/oDlc= 2 talos-default-worker-1 ["172.20.0.5:51820"]

The peer ID is the WireGuard public key.

KubeSpanPeerSpecs are built from the cluster discovery data.

KubeSpanPeerStatuses

The status of a node’s WireGuard peers can be obtained with:

$ talosctl get kubespanpeerstatuses

ID VERSION LABEL ENDPOINT STATE RX TX

06D9QQOydzKrOL7oeLiqHy9OWE8KtmJzZII2A5/FLFI= 63 talos-default-master-2 172.20.0.3:51820 up 15043220 17869488

THtfKtfNnzJs1nMQKs5IXqK0DFXmM//0WMY+NnaZrhU= 62 talos-default-master-3 172.20.0.4:51820 up 14573208 18157680

nVHu7l13uZyk0AaI1WuzL2/48iG8af4WRv+LWmAax1M= 60 talos-default-worker-2 172.20.0.6:51820 up 130072 46888

zXP0QeqRo+CBgDH1uOBiQ8tA+AKEQP9hWkqmkE/oDlc= 60 talos-default-worker-1 172.20.0.5:51820 up 130044 46556

KubeSpan peer status includes the following information:

- the actual endpoint used for peer communication

- link state:

  - unknown: the endpoint was just changed, link state is not known yet

  - up: there is a recent handshake from the peer

  - down: there is no handshake from the peer

- number of bytes sent/received over the WireGuard link with the peer

If the connection state goes down, Talos cycles through the available endpoints until it finds one that works.

Peer status information is updated every 30 seconds.
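
The link states and the endpoint cycling described above can be sketched as follows. The handshake threshold, the function names, and the simple round-robin order are assumptions made for this illustration; the guide does not state the actual values or the selection strategy Talos uses.

```python
RECENT_HANDSHAKE_SECONDS = 180  # hypothetical threshold, not taken from Talos


def link_state(endpoint_just_changed, seconds_since_handshake):
    """Classify a peer link as "unknown", "up" or "down".

    seconds_since_handshake is None when no handshake has been seen.
    """
    if endpoint_just_changed:
        return "unknown"
    if (
        seconds_since_handshake is not None
        and seconds_since_handshake <= RECENT_HANDSHAKE_SECONDS
    ):
        return "up"
    return "down"


def next_endpoint(endpoints, current):
    """Return the endpoint to try after current, wrapping around the list."""
    if not endpoints:
        return None
    if current not in endpoints:
        return endpoints[0]
    return endpoints[(endpoints.index(current) + 1) % len(endpoints)]


if __name__ == "__main__":
    endpoints = ["172.20.0.3:51820", "[fd83::3]:51820"]
    current = endpoints[0]
    if link_state(False, None) == "down":
        current = next_endpoint(endpoints, current)
    print(current)
```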

KubeSpanEndpoints

A node’s WireGuard endpoints (peer addresses) can be obtained with:

$ talosctl get kubespanendpoints

ID VERSION ENDPOINT AFFILIATE ID

06D9QQOydzKrOL7oeLiqHy9OWE8KtmJzZII2A5/FLFI= 1 172.20.0.3:51820 2VfX3nu67ZtZPl57IdJrU87BMjVWkSBJiL9ulP9TCnF

THtfKtfNnzJs1nMQKs5IXqK0DFXmM//0WMY+NnaZrhU= 1 172.20.0.4:51820 b3DebkPaCRLTLLWaeRF1ejGaR0lK3m79jRJcPn0mfA6C

nVHu7l13uZyk0AaI1WuzL2/48iG8af4WRv+LWmAax1M= 1 172.20.0.6:51820 NVtfu1bT1QjhNq5xJFUZl8f8I8LOCnnpGrZfPpdN9WlB

zXP0QeqRo+CBgDH1uOBiQ8tA+AKEQP9hWkqmkE/oDlc= 1 172.20.0.5:51820 6EVq8RHIne03LeZiJ60WsJcoQOtttw1ejvTS6SOBzhUA

The endpoint ID is the base64 encoded WireGuard public key.

The observed endpoints are submitted back to the discovery service (if enabled) so that other peers can try additional endpoints to establish the connection.

18 - Managing PKI

Generating an Administrator Key Pair

In order to create a key pair, you will need the root CA.

Save the CA public key and CA private key as ca.crt and ca.key respectively.

Now, run the following commands to generate a certificate:

talosctl gen key --name admin

talosctl gen csr --key admin.key --ip 127.0.0.1

talosctl gen crt --ca ca --csr admin.csr --name admin

Now, base64 encode admin.crt, and admin.key:

cat admin.crt | base64

cat admin.key | base64

You can now set the crt and key fields in the talosconfig to the base64 encoded strings.
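
Some base64 command-line tools wrap their output across several lines, which is awkward to paste into a single YAML value. As an alternative, the sketch below encodes a file with Python's standard library, which produces one unbroken line. The helper names are hypothetical; the file names are the ones used in this guide.

```python
import base64
from pathlib import Path


def encode_bytes(data: bytes) -> str:
    """Base64 encode bytes into a single-line ASCII string."""
    return base64.b64encode(data).decode("ascii")


def encode_file(path) -> str:
    """Base64 encode the contents of a file such as admin.crt or admin.key."""
    return encode_bytes(Path(path).read_bytes())


if __name__ == "__main__":
    for name in ("admin.crt", "admin.key"):
        if Path(name).exists():
            print(f"{name}: {encode_file(name)}")
```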

Renewing an Expired Administrator Certificate

In order to renew the certificate, you will need the root CA and the admin private key. The base64 encoded key can be found in the configuration file of any control plane node. Its exact location depends on the version of the configuration file you are using.

Save the CA public key, CA private key, and admin private key as ca.crt, ca.key, and admin.key respectively.

Now, run the following commands to generate a certificate:

talosctl gen csr --key admin.key --ip 127.0.0.1

talosctl gen crt --ca ca --csr admin.csr --name admin

You should see admin.crt in your current directory.

Now, base64 encode admin.crt:

cat admin.crt | base64

You can now set the certificate in the talosconfig to the base64 encoded string.

19 - Resetting a Machine

From time to time, it may be beneficial to reset a Talos machine to its “original” state. Bear in mind that this is a destructive action for the given machine. Doing this removes the machine from Kubernetes and etcd (if applicable), and clears any data on the machine that would normally persist across a reboot.

The API command for doing this is talosctl reset.

There are a couple of flags as part of this command:

Flags:

--graceful if true, attempt to cordon/drain node and leave etcd (if applicable) (default true)

--reboot if true, reboot the node after resetting instead of shutting down

The graceful flag is especially important when considering HA vs. non-HA Talos clusters.

If the machine is part of an HA cluster, a normal, graceful reset should work just fine right out of the box as long as the cluster is in a good state.

However, if this is a single node cluster being used for testing purposes, a graceful reset is not an option since Etcd cannot be “left” if there is only a single member.

In this case, reset should be used with --graceful=false to skip performing checks that would normally block the reset.

20 - Role-based access control (RBAC)

Talos v0.11 introduced initial support for role-based access control (RBAC). This guide will explain what that is and how to enable it without losing access to the cluster.

RBAC in Talos

Talos uses certificates to authorize users. The certificate subject’s organization field is used to encode user roles. There is a set of predefined roles that allow access to different API methods:

- os:admin grants access to all methods;

- os:reader grants access to “safe” methods (for example, that includes the ability to list files, but does not include the ability to read files content);

- os:etcd:backup grants access to /machine.MachineService/EtcdSnapshot method.

Roles in the current talosconfig can be checked with the following command:

$ talosctl config info

[...]

Roles: os:admin

[...]

RBAC is enabled by default in new clusters created with talosctl v0.11+ and disabled otherwise.
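
The role model described above can be pictured as a lookup from roles to allowed API methods. The sketch below is only an illustration: apart from /machine.MachineService/EtcdSnapshot, which is named in this guide, the method names in the table are placeholders chosen for the example, and the real set of "safe" methods is defined by Talos itself.

```python
# Hypothetical role table. Only the EtcdSnapshot entry comes from this guide;
# the os:reader entry is a placeholder standing in for the "safe" methods.
ROLE_METHODS = {
    "os:reader": {"/machine.MachineService/List"},
    "os:etcd:backup": {"/machine.MachineService/EtcdSnapshot"},
}


def is_allowed(roles, method):
    """Return True if any of the certificate's roles permits the method."""
    if "os:admin" in roles:
        return True  # os:admin grants access to all methods
    return any(method in ROLE_METHODS.get(role, set()) for role in roles)


if __name__ == "__main__":
    print(is_allowed({"os:reader"}, "/machine.MachineService/List"))
    print(is_allowed({"os:reader"}, "/machine.MachineService/Read"))
    print(is_allowed({"os:etcd:backup"}, "/machine.MachineService/EtcdSnapshot"))
```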

Enabling RBAC

First, both the Talos cluster and talosctl tool should be upgraded.

Then the talosctl config new command should be used to generate a new client configuration with the os:admin role.

Additional configurations and certificates for different roles can be generated by passing --roles flag:

talosctl config new --roles=os:reader reader

That command will create a new client configuration file reader with a new certificate with os:reader role.

After that, RBAC should be enabled in the machine configuration:

machine:
  features:
    rbac: true

21 - Storage

In Kubernetes, using storage in the right way is well-facilitated by the API.

However, unless you are running in a major public cloud, that API may not be hooked up to anything.

This frequently sends users down a rabbit hole of researching all the various options for storage backends for their platform, for Kubernetes, and for their workloads.

There are a lot of options out there, and it can be fairly bewildering.

For Talos, we try to limit the options somewhat to make the decision-making easier.

Public Cloud

If you are running on a major public cloud, use their block storage. It is easy and automatic.

Storage Clusters

Redundancy in storage is usually very important. Scaling capabilities, reliability, speed, maintenance load, and ease of use are all factors you must consider when managing your own storage.

Running a storage cluster can be a very good choice when managing your own storage, and there are two projects we recommend, depending on your situation.

If you need vast amounts of storage composed of more than a dozen or so disks, just use Rook to manage Ceph. Also, if you need both mount-once and mount-many capabilities, Ceph is your answer. Ceph also bundles in an S3-compatible object store. The downside of Ceph is that there are a lot of moving parts.

Please note that most people should never use mount-many semantics. NFS is pervasive because it is old and easy, not because it is a good idea. While it may seem like a convenience at first, there are all manner of locking, performance, change control, and reliability concerns inherent in any mount-many situation, so we strongly recommend you avoid this method.

If your storage needs are small enough to not need Ceph, use Mayastor.

Rook/Ceph

Ceph is the grandfather of open source storage clusters. It is big, has a lot of pieces, and will do just about anything. It scales better than almost any other system out there, open source or proprietary, being able to easily add and remove storage over time with no downtime, safely and easily. It comes bundled with RadosGW, an S3-compatible object store. It comes with CephFS, a NFS-like clustered filesystem. And of course, it comes with RBD, a block storage system.

With the help of Rook, the vast majority of the complexity of Ceph is hidden away by a very robust operator, allowing you to control almost everything about your Ceph cluster from fairly simple Kubernetes CRDs.

So if Ceph is so great, why not use it for everything?

Ceph can be rather slow for small clusters. It relies heavily on CPUs and massive parallelisation to provide good cluster performance, so if you don’t have much of those dedicated to Ceph, it is not going to be well-optimised for you. Also, if your cluster is small, just running Ceph may eat up a significant amount of the resources you have available.

Troubleshooting Ceph can be difficult if you do not understand its architecture. There are lots of acronyms and the documentation assumes a fair level of knowledge. There are very good tools for inspection and debugging, but this is still frequently seen as a concern.

Mayastor

Mayastor is an OpenEBS project built in Rust utilising the modern NVMEoF system. (Despite the name, Mayastor does not require you to have NVME drives.) It is fast and lean but still cluster-oriented and cloud native. Unlike most of the other OpenEBS projects, it is not built on the ancient iSCSI system.

Unlike Ceph, Mayastor is just a block store. It focuses on block storage and does it well. It is much less complicated to set up than Ceph, but you probably wouldn’t want to use it for more than a few dozen disks.

Mayastor is new, maybe too new. If you’re looking for something well-tested and battle-hardened, this is not it. If you’re looking for something lean, future-oriented, and simpler than Ceph, it might be a great choice.

Video Walkthrough

To see a live demo of this section, see the video below:

Prep Nodes

Either during initial cluster creation or on running worker nodes, several machine config values should be edited.

This can be done with talosctl edit machineconfig or via config patches during talosctl gen config.

- Under /machine/sysctls, add vm.nr_hugepages: "512"

- Under /machine/kubelet/extraMounts, add /var/local like so:

...
extraMounts:
  - destination: /var/local
    type: bind
    source: /var/local
    options:
      - rbind
      - rshared
      - rw
...

- Either using kubectl label node in a pre-existing cluster or by updating /machine/kubelet/extraArgs in machine config, add openebs.io/engine=mayastor as a node label. If being done via machine config, extraArgs may look like:

...
extraArgs:
  node-labels: openebs.io/engine=mayastor
...

Deploy Mayastor

Using the Mayastor docs as a reference, apply all YAML files necessary. At the time of writing this looked like:

kubectl create namespace mayastor

kubectl apply -f https://raw.githubusercontent.com/openebs/Mayastor/master/deploy/moac-rbac.yaml

kubectl apply -f https://raw.githubusercontent.com/openebs/Mayastor/master/deploy/nats-deployment.yaml

kubectl apply -f https://raw.githubusercontent.com/openebs/Mayastor/master/csi/moac/crds/mayastorpool.yaml

kubectl apply -f https://raw.githubusercontent.com/openebs/Mayastor/master/deploy/csi-daemonset.yaml

kubectl apply -f https://raw.githubusercontent.com/openebs/Mayastor/master/deploy/moac-deployment.yaml

kubectl apply -f https://raw.githubusercontent.com/openebs/Mayastor/master/deploy/mayastor-daemonset.yaml

Create Pools

Each “storage” node should have a “MayastorPool” that defines the local disks to use for storage. These are later considered during scheduling and replication of data. Create the pool by issuing the following, updating as necessary:

cat <<EOF | kubectl create -f -
apiVersion: "openebs.io/v1alpha1"
kind: MayastorPool
metadata:
  name: pool-on-talos-xxx
  namespace: mayastor
spec:
  node: talos-xxx
  disks: ["/dev/sdx"]
EOF
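
When there are several storage nodes, writing one manifest per node by hand gets repetitive. The sketch below generates a MayastorPool object per node and prints them as a single JSON list, which kubectl can read in place of YAML. The node names and disk paths in the example are placeholders; the field layout mirrors the manifest above.

```python
import json


def mayastor_pool(node, disks, namespace="mayastor"):
    """Build a MayastorPool manifest for one storage node."""
    return {
        "apiVersion": "openebs.io/v1alpha1",
        "kind": "MayastorPool",
        "metadata": {"name": f"pool-on-{node}", "namespace": namespace},
        "spec": {"node": node, "disks": list(disks)},
    }


def pool_list(nodes_to_disks):
    """Wrap one pool per node in a List object that kubectl can consume."""
    items = [mayastor_pool(node, disks) for node, disks in nodes_to_disks.items()]
    return {"apiVersion": "v1", "kind": "List", "items": items}


if __name__ == "__main__":
    # Placeholder node names and disks; replace with your own.
    nodes = {"talos-xxx": ["/dev/sdx"], "talos-yyy": ["/dev/sdx"]}
    print(json.dumps(pool_list(nodes), indent=2))
```

Saved as a script (for example a hypothetical pools.py), the output could be piped into kubectl create -f - in the same way as the heredoc above.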

Create StorageClass

With the pools created for each node, create a storage class that uses the nvmf protocol, updating the number of replicas as necessary:

cat <<EOF | kubectl create -f -
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: mayastor-nvmf
parameters:
  repl: '1'
  protocol: 'nvmf'
provisioner: io.openebs.csi-mayastor
EOF

Consume Storage

The storage can now be consumed by creating a PersistentVolumeClaim (PVC) that references the StorageClass. The PVC can then be used by a Pod or Deployment. An example of creating a PersistentVolumeClaim may look like:

cat <<EOF | kubectl create -f -
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: mayastor-volume-claim
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
  storageClassName: mayastor-nvmf
EOF

NFS

NFS is an old pack animal long past its prime. However, it is supported by a wide variety of systems. You don’t want to use it unless you have to, but unfortunately, that “have to” is too frequent.

NFS is slow, has all kinds of bottlenecks involving contention, distributed locking, single points of service, and more.

The NFS client is part of the kubelet image maintained by the Talos team.

This means that the version installed in your running kubelet is the version of NFS supported by Talos.

You can reduce some of the contention problems by parceling Persistent Volumes from separate underlying directories.

Object storage

Ceph comes with an S3-compatible object store, but there are other options, as well. These can often be built on top of other storage backends. For instance, you may have your block storage running with Mayastor but assign a Pod a large Persistent Volume to serve your object store.

One of the most popular open source add-on object stores is MinIO.

Others (iSCSI)

The most common remaining systems involve iSCSI in one form or another. This includes things like the original OpenEBS, Rancher’s Longhorn, and many proprietary systems. Unfortunately, Talos does not support iSCSI-based systems. iSCSI in Linux is facilitated by open-iscsi. This system was designed long before containers caught on, and it is not well suited to the task, especially when coupled with a read-only host operating system.

One day, we hope to work out a solution for facilitating iSCSI-based systems, but this is not yet available.

22 - Troubleshooting Control Plane

This guide is written as a series of topics and detailed answers for each topic. It starts with the basics of the control plane and goes into Talos specifics.

In this guide we assume that Talos client config is available and Talos API access is available.

Kubernetes client configuration can be pulled from control plane nodes with talosctl -n <IP> kubeconfig

(this command works before Kubernetes is fully booted).

What is a control plane node?

Talos nodes which have .machine.type of init or controlplane are control plane nodes.

The only difference between init and controlplane nodes is that an init node automatically bootstraps a single-node etcd cluster on first boot if the etcd data directory is empty.

A node with type init can be replaced with a controlplane node which is triggered to run etcd bootstrap with the talosctl --nodes <IP> bootstrap command.

Use of init type nodes is discouraged, as it might lead to a split-brain scenario if one node in an existing cluster is reinstalled while its config type is still init.

It is critical to make sure only one control plane node runs in bootstrap mode (either with node type init or via the bootstrap API/talosctl bootstrap), as having more than one node in bootstrap mode leads to a split-brain scenario (multiple etcd clusters are built instead of a single cluster).

What is special about control plane node?

Control plane nodes in Talos run etcd, which provides the data store for Kubernetes, and the Kubernetes control plane components (kube-apiserver, kube-controller-manager and kube-scheduler).

Control plane nodes are tainted by default to prevent workloads from being scheduled to control plane nodes.

How many control plane nodes should be deployed?

With a single control plane node, the cluster is not HA: if that single node experiences a hardware failure, the cluster control plane is broken and can’t be recovered. Single control plane node clusters are still used as test clusters and in edge deployments, but it should be noted that this setup is not HA.

The number of control plane nodes should be odd (1, 3, 5, …), as an even number of nodes adds no failure tolerance over the next lower odd number: e.g. with 2 control plane nodes the etcd quorum is 2, so failure of either node breaks quorum, and this setup is almost equivalent to a single control plane node cluster.

With three control plane nodes, the cluster can tolerate the failure of any single control plane node. With five control plane nodes, the cluster can tolerate the failure of any two control plane nodes.
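The arithmetic behind these numbers can be sketched in a few lines of Python. This is only an illustration of majority quorum, not Talos or etcd code; the function names quorum and failure_tolerance are made up for this example.

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with `members` voting members."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def failure_tolerance(members: int) -> int:
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    # Print quorum size and tolerated failures for 1 to 5 control plane nodes.
    for n in range(1, 6):
        print(f"{n} node(s): quorum={quorum(n)}, tolerated failures={failure_tolerance(n)}")
```

Running the loop shows that going from 3 to 4 nodes raises the quorum but not the number of tolerated failures, which is why even member counts bring no benefit.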

What is control plane endpoint?

Kubernetes requires having a control plane endpoint which points to any healthy API server running on a control plane node.

The control plane endpoint is specified as a URL like https://endpoint:6443/.

At any point in time, even during failures, the control plane endpoint should point to a healthy API server instance.

As kube-apiserver runs with host network, the control plane endpoint should point to one of the control plane node IPs: node1:6443, node2:6443, …

For single control plane node clusters, the control plane endpoint might be https://IP:6443/ or https://DNS:6443/, where IP is the IP of the control plane node and DNS points to IP.

The DNS form of the endpoint allows the IP address of the control plane node to change over time without changing the endpoint.

For HA clusters, the control plane endpoint can be implemented as:

- TCP L7 loadbalancer with active health checks against port 6443

- round-robin DNS with active health checks against port 6443

- BGP anycast IP with health checks

- virtual shared L2 IP

It is critical that the control plane endpoint works correctly during the cluster bootstrap phase, as nodes discover each other using the control plane endpoint.
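To make the idea of an active health check against port 6443 more concrete, here is a minimal Python sketch. It is not how any particular load balancer works: the helper names tcp_probe and healthy_backends are hypothetical, and a plain TCP connect only shows that the port accepts connections, not that kube-apiserver is fully healthy.

```python
import socket
from typing import Callable, Iterable, List


def tcp_probe(host: str, port: int = 6443, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution errors.
        return False


def healthy_backends(
    nodes: Iterable[str],
    probe: Callable[[str], bool] = tcp_probe,
) -> List[str]:
    """Keep only the nodes whose API server port answers the probe."""
    return [node for node in nodes if probe(node)]
```

A load balancer or DNS updater built on this idea would call healthy_backends periodically with the control plane node addresses and route traffic only to the returned nodes. The probe is passed as a parameter so that it can be replaced, for example with a stricter HTTPS check.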

kubelet is not running on control plane node

The kubelet service should be running on a control plane node as soon as networking is configured:

$ talosctl -n <IP> service kubelet

NODE 172.20.0.2

ID kubelet

STATE Running

HEALTH OK

EVENTS [Running]: Health check successful (2m54s ago)

[Running]: Health check failed: Get "http://127.0.0.1:10248/healthz": dial tcp 127.0.0.1:10248: connect: connection refused (3m4s ago)

[Running]: Started task kubelet (PID 2334) for container kubelet (3m6s ago)

[Preparing]: Creating service runner (3m6s ago)

[Preparing]: Running pre state (3m15s ago)

[Waiting]: Waiting for service "timed" to be "up" (3m15s ago)

[Waiting]: Waiting for service "cri" to be "up", service "timed" to be "up" (3m16s ago)

[Waiting]: Waiting for service "cri" to be "up", service "networkd" to be "up", service "timed" to be "up" (3m18s ago)

If kubelet is not running, it might be caused by wrong configuration; check the kubelet logs with talosctl logs:

$ talosctl -n <IP> logs kubelet

172.20.0.2: I0305 20:45:07.756948 2334 controller.go:101] kubelet config controller: starting controller

172.20.0.2: I0305 20:45:07.756995 2334 controller.go:267] kubelet config controller: ensuring filesystem is set up correctly

172.20.0.2: I0305 20:45:07.757000 2334 fsstore.go:59] kubelet config controller: initializing config checkpoints directory "/etc/kubernetes/kubelet/store"
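When checking many nodes, the service status shown above can be evaluated by a small script. The following Python sketch assumes the output keeps the layout shown in this guide (one field name such as STATE or HEALTH at the start of a line, followed by its value); the function names are hypothetical and the output format may differ between Talos versions.

```python
from typing import Dict

# Field names taken from the example output in this guide.
STATUS_FIELDS = {"NODE", "ID", "STATE", "HEALTH"}


def parse_service_status(output: str) -> Dict[str, str]:
    """Extract NODE, ID, STATE and HEALTH from `talosctl service <name>` output."""
    fields: Dict[str, str] = {}
    for line in output.splitlines():
        parts = line.split(None, 1)  # field name, then the rest of the line
        if len(parts) == 2 and parts[0] in STATUS_FIELDS:
            fields.setdefault(parts[0], parts[1].strip())
    return fields


def service_is_healthy(output: str) -> bool:
    """True only if the service reports STATE Running and HEALTH OK."""
    status = parse_service_status(output)
    return status.get("STATE") == "Running" and status.get("HEALTH") == "OK"
```

The text passed to these functions would be the captured output of talosctl -n <IP> service kubelet (or etcd). Event lines are ignored because they do not start with one of the expected field names.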

etcd is not running on bootstrap node

etcd should be running on the bootstrap node immediately (the bootstrap node is either an init node or a controlplane node after the talosctl bootstrap command was issued).

When the node boots for the first time, the etcd data directory /var/lib/etcd is empty and Talos launches etcd in a mode to build the initial cluster of a single node.

At this time the /var/lib/etcd directory becomes non-empty and etcd runs as usual.

If etcd is not running, check service etcd state:

$ talosctl -n <IP> service etcd

NODE 172.20.0.2

ID etcd

STATE Running

HEALTH OK

EVENTS [Running]: Health check successful (3m21s ago)

[Running]: Started task etcd (PID 2343) for container etcd (3m26s ago)

[Preparing]: Creating service runner (3m26s ago)

[Preparing]: Running pre state (3m26s ago)

[Waiting]: Waiting for service "cri" to be "up", service "networkd" to be "up", service "timed" to be "up" (3m26s ago)

If the service is stuck in the Preparing state on the bootstrap node, it might be related to a slow network: at this stage Talos pulls the etcd image from the container registry.

If etcd service is crashing and restarting, check service logs with talosctl -n <IP> logs etcd.

Most common reasons for crashes are:

- wrong arguments passed via extraArgs in the configuration;

- booting Talos on non-empty disk with previous Talos installation, /var/lib/etcd contains data from old cluster.

etcd is not running on non-bootstrap control plane node

The etcd service on a non-bootstrap control plane node waits for Kubernetes to boot successfully on the bootstrap node so that it can find other peers to build a cluster.

As soon as the bootstrap node boots the Kubernetes control plane components and kubectl get endpoints returns the IP of the bootstrap control plane node, the other control plane nodes will start joining the etcd cluster, followed by the Kubernetes control plane components on each control plane node.

Kubernetes static pod definitions are not generated

Talos should write static pod definitions for the Kubernetes control plane:

$ talosctl -n <IP> ls /etc/kubernetes/manifests

NODE NAME

172.20.0.2 .

172.20.0.2 talos-kube-apiserver.yaml

172.20.0.2 talos-kube-controller-manager.yaml

172.20.0.2 talos-kube-scheduler.yaml

If static pod definitions are not rendered, check etcd and kubelet service health (see above), and controller runtime logs (talosctl logs controller-runtime).

Talos prints error an error on the server ("") has prevented the request from succeeding

This is expected during initial cluster bootstrap and sometimes after a reboot:

[ 70.093289] [talos] task labelNodeAsMaster (1/1): starting

[ 80.094038] [talos] retrying error: an error on the server ("") has prevented the request from succeeding (get nodes talos-default-master-1)

Initially the kube-apiserver component is not running yet, and it takes some time before it becomes fully up during bootstrap (the image has to be pulled from the Internet, etc.).

Once the control plane endpoint is up, Talos should proceed.

If Talos doesn’t proceed further, it might be a configuration issue.

In any case, status of control plane components can be checked with talosctl containers -k:

$ talosctl -n <IP> containers --kubernetes

NODE NAMESPACE ID IMAGE PID STATUS

172.20.0.2 k8s.io kube-system/kube-apiserver-talos-default-master-1 k8s.gcr.io/pause:3.2 2539 SANDBOX_READY

172.20.0.2 k8s.io └─ kube-system/kube-apiserver-talos-default-master-1:kube-apiserver k8s.gcr.io/kube-apiserver:v1.20.4 2572 CONTAINER_RUNNING

If kube-apiserver shows as CONTAINER_EXITED, it might have exited due to configuration error.

Logs can be checked with talosctl logs --kubernetes (or with -k as a shorthand):

$ talosctl -n <IP> logs -k kube-system/kube-apiserver-talos-default-master-1:kube-apiserver

172.20.0.2: 2021-03-05T20:46:13.133902064Z stderr F 2021/03/05 20:46:13 Running command:

172.20.0.2: 2021-03-05T20:46:13.133933824Z stderr F Command env: (log-file=, also-stdout=false, redirect-stderr=true)

172.20.0.2: 2021-03-05T20:46:13.133938524Z stderr F Run from directory:

172.20.0.2: 2021-03-05T20:46:13.13394154Z stderr F Executable path: /usr/local/bin/kube-apiserver

...

Talos prints error nodes "talos-default-master-1" not found

This error means that kube-apiserver is up and the control plane endpoint is healthy, but kubelet hasn’t got its client certificate yet and wasn’t able to register itself.

For the kubelet to get its client certificate, the following conditions should apply:

- control plane endpoint is healthy (kube-apiserver is running)

- bootstrap manifests got successfully deployed (for CSR auto-approval)

- kube-controller-manager is running

CSR state can be checked with kubectl get csr:

$ kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

csr-jcn9j 14m kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:q9pyzr Approved,Issued

csr-p6b9q 14m kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:q9pyzr Approved,Issued

csr-sw6rm 14m kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:q9pyzr Approved,Issued

csr-vlghg 14m kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:q9pyzr Approved,Issued
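If the list of CSRs is long, the ones that still block the kubelet can be picked out with a short script. The following Python sketch assumes the tabular layout shown above, with the name in the first column and the condition in the last column; the function name unissued_csrs is hypothetical.

```python
from typing import List


def unissued_csrs(output: str) -> List[str]:
    """Return names of CSRs whose CONDITION column does not include Issued."""
    lines = [line for line in output.splitlines() if line.strip()]
    names: List[str] = []
    for line in lines[1:]:  # the first non-empty line is the header row
        columns = line.split()
        name, condition = columns[0], columns[-1]
        if "Issued" not in condition.split(","):
            names.append(name)
    return names
```

Passing the captured output of kubectl get csr to unissued_csrs returns an empty list when every request has been approved and issued. Any name it returns points to a CSR that is still pending or was not issued, which is worth inspecting together with the conditions listed above.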

Talos prints error node not ready

Node in Kubernetes is marked as Ready once CNI is up.