
Virtualized Platforms

1 - Hyper-V

Talos is known to work on Hyper-V; however, it is currently undocumented.

2 - KVM

Talos is known to work on KVM; however, it is currently undocumented.

3 - Proxmox

In this guide we will create a Kubernetes cluster using Proxmox.

Video Walkthrough

A live demo of this writeup is available on YouTube.

Installation

How to Get Proxmox

It is assumed that you have already installed Proxmox onto the server you wish to create Talos VMs on. Visit the Proxmox downloads page if necessary.

Install talosctl

You can download talosctl via

github.com/siderolabs/talos/releases

curl https://github.com/siderolabs/talos/releases/download/<version>/talosctl-<platform>-<arch> -L -o talosctl

For example, version v0.13.0 for the linux platform on amd64:

curl https://github.com/siderolabs/talos/releases/download/v0.13.0/talosctl-linux-amd64 -L -o talosctl

sudo cp talosctl /usr/local/bin

sudo chmod +x /usr/local/bin/talosctl
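The URL template above can be filled in by a small helper. This is only a sketch: the function names `talosctl_url` and `detect_arch` are hypothetical, and the mapping from `uname -m` output to release architecture names covers just two common cases and is an assumption rather than something this guide specifies.

```shell
# Build the talosctl download URL from a version, platform, and architecture.
# The URL layout follows the curl template shown in this guide.
talosctl_url() {
  version="$1"; platform="$2"; arch="$3"
  printf 'https://github.com/siderolabs/talos/releases/download/%s/talosctl-%s-%s\n' \
    "$version" "$platform" "$arch"
}

# Map `uname -m` output to an architecture name (assumed mapping, two cases only).
detect_arch() {
  case "$(uname -m)" in
    x86_64) echo amd64 ;;
    aarch64|arm64) echo arm64 ;;
    *) echo "unsupported architecture: $(uname -m)" >&2; return 1 ;;
  esac
}

# Example: print the URL for v0.13.0 on linux/amd64.
talosctl_url v0.13.0 linux amd64
```

The printed URL can then be passed to `curl -L -o talosctl` as in the commands above.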

Download ISO Image

In order to install Talos in Proxmox, you will need the ISO image from the Talos release page.

You can download talos-amd64.iso via

github.com/siderolabs/talos/releases

mkdir -p _out/

curl https://github.com/siderolabs/talos/releases/download/<version>/talos-<arch>.iso -L -o _out/talos-<arch>.iso

For example, version v0.13.0 for the amd64 architecture:

mkdir -p _out/

curl https://github.com/siderolabs/talos/releases/download/v0.13.0/talos-amd64.iso -L -o _out/talos-amd64.iso

Upload ISO

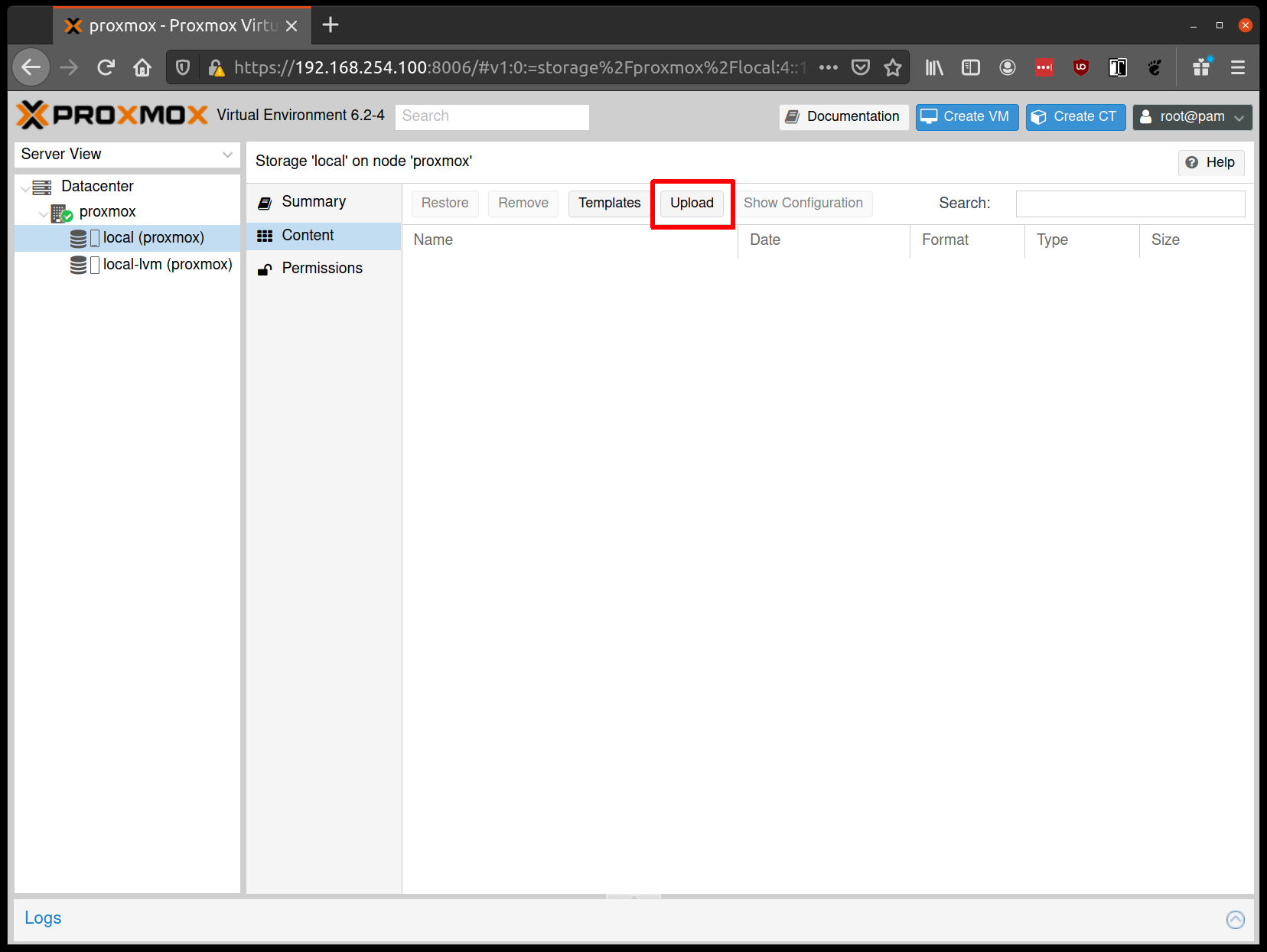

From the Proxmox UI, select the “local” storage and enter the “Content” section. Click the “Upload” button:

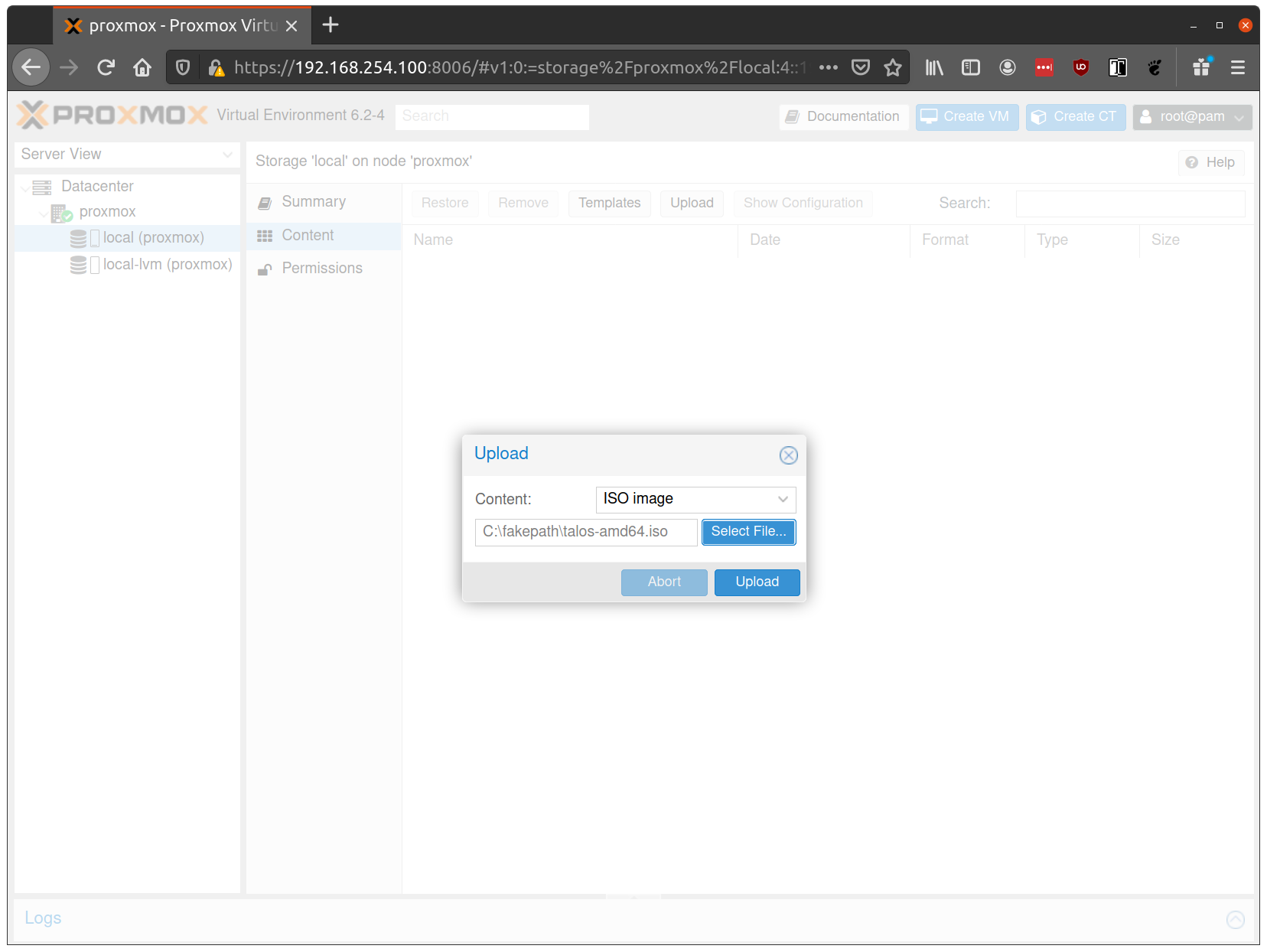

Select the ISO you downloaded previously, then hit “Upload”.

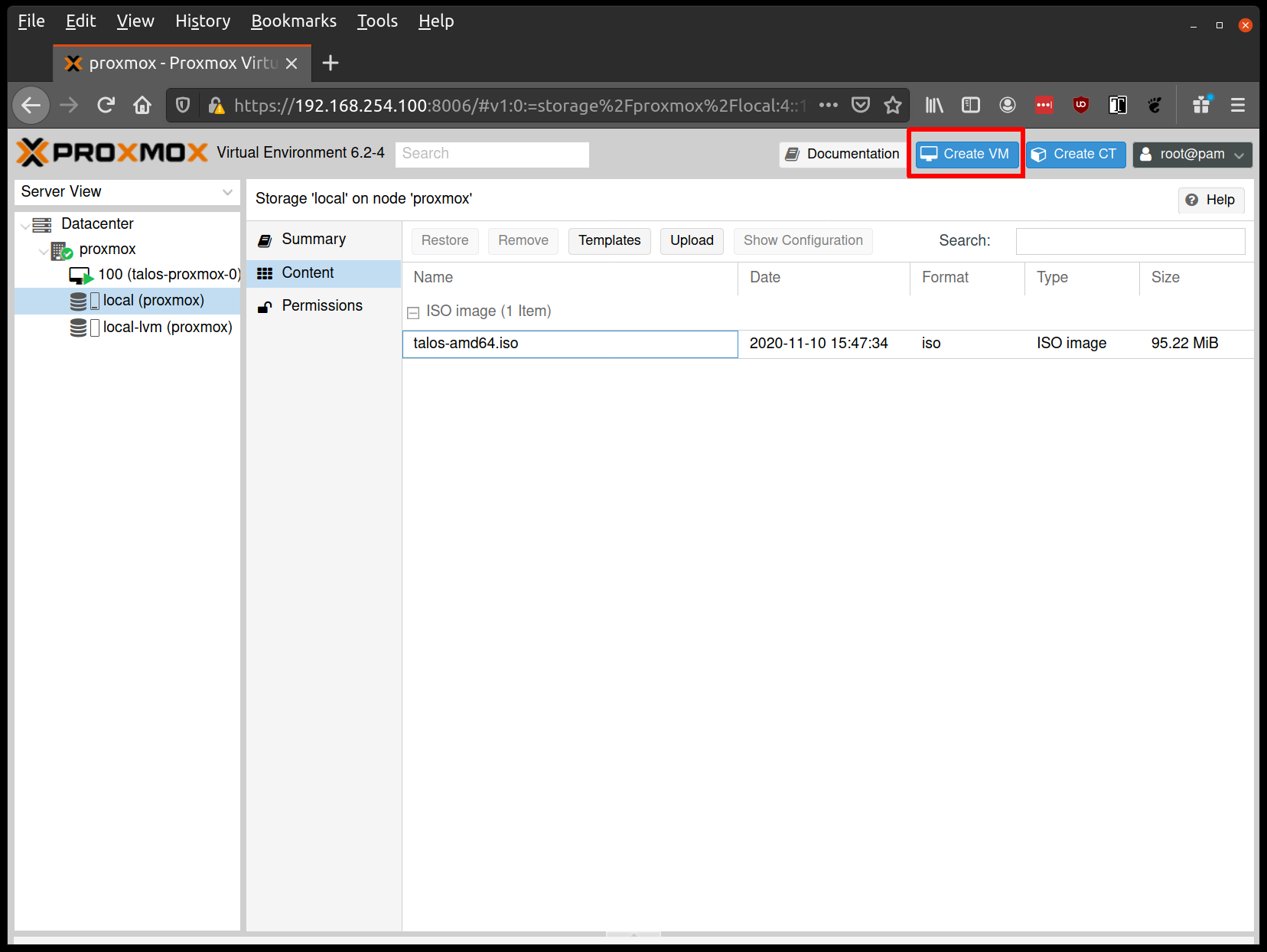

Create VMs

Start by creating a new VM by clicking the “Create VM” button in the Proxmox UI:

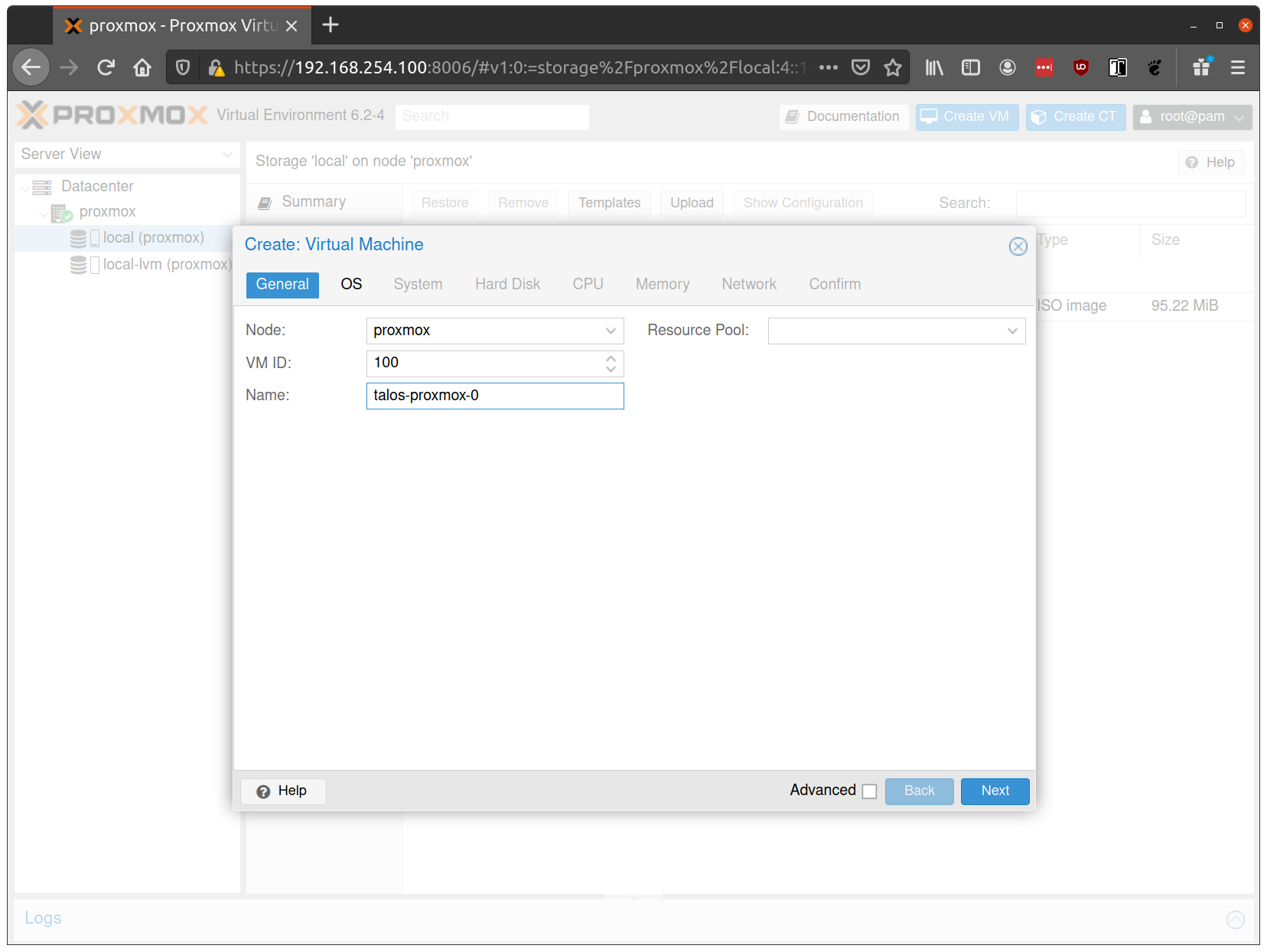

Fill out a name for the new VM:

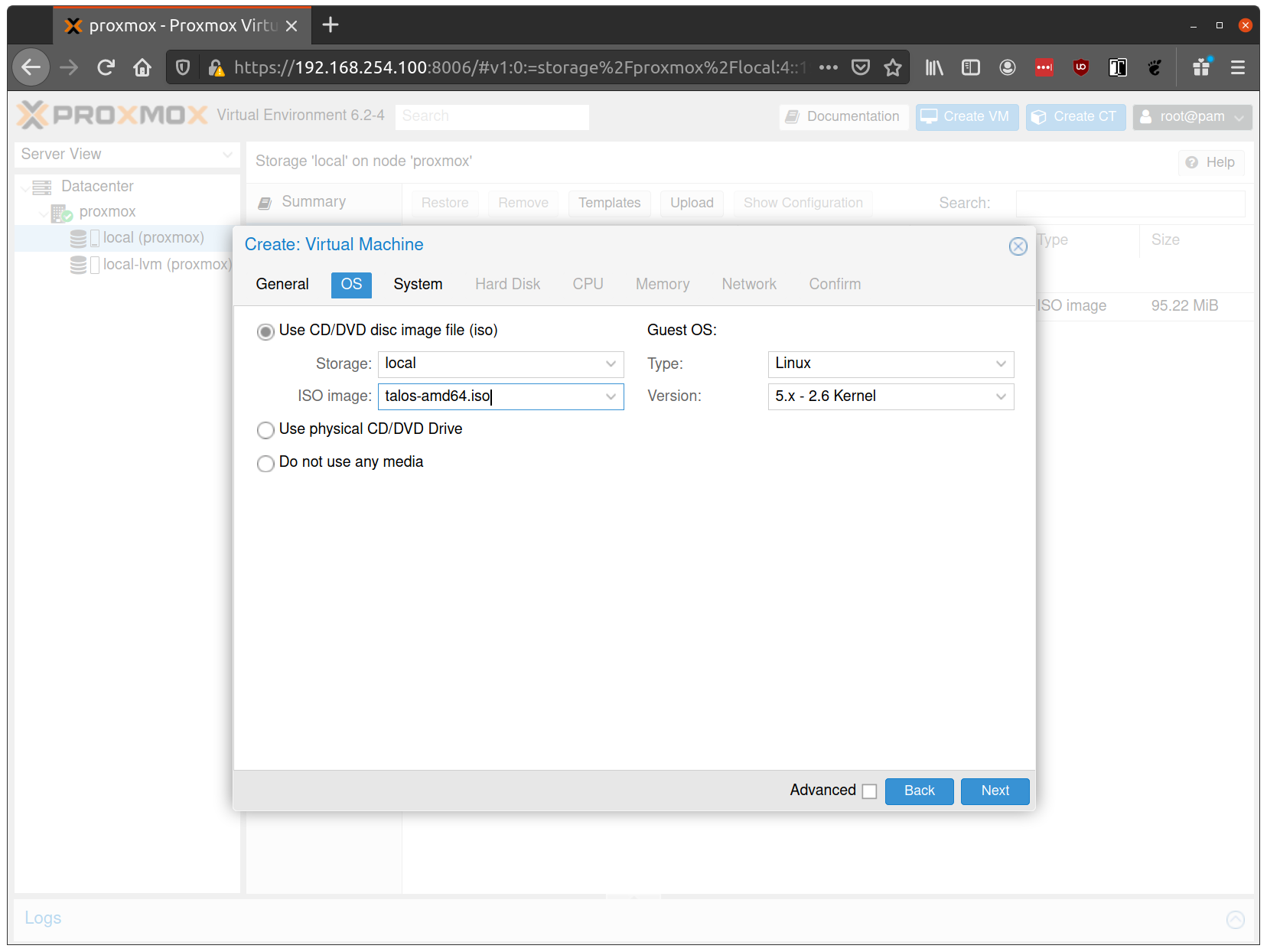

In the OS tab, select the ISO we uploaded earlier:

Keep the defaults set in the “System” tab.

Keep the defaults in the “Hard Disk” tab as well, only changing the size if desired.

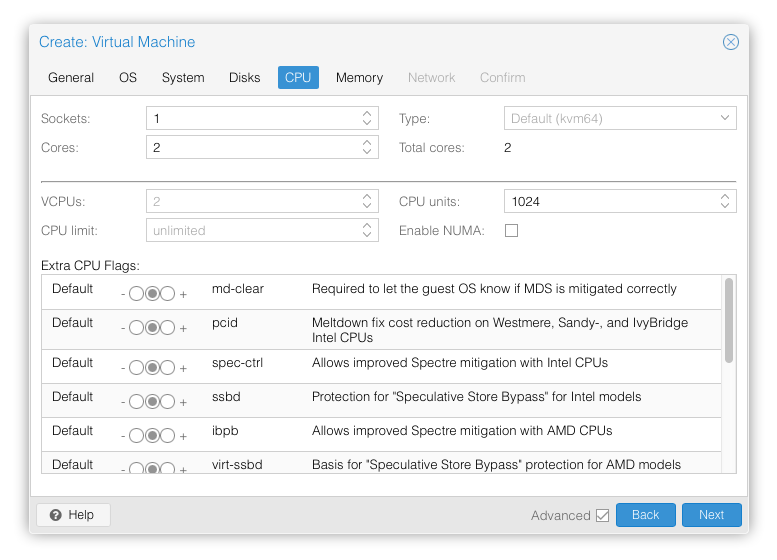

In the “CPU” section, give at least 2 cores to the VM:

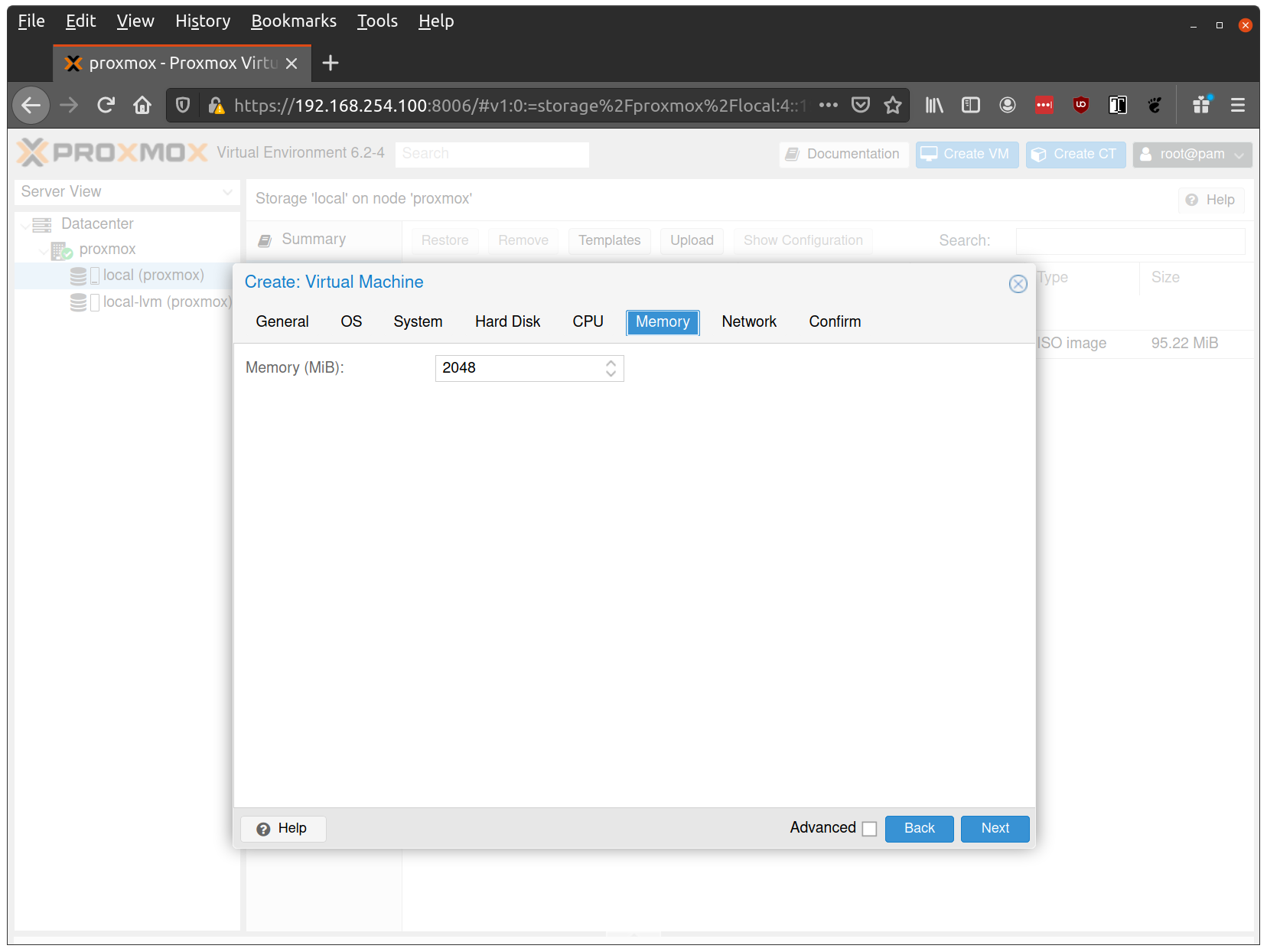

Verify that the RAM is set to at least 2GB:

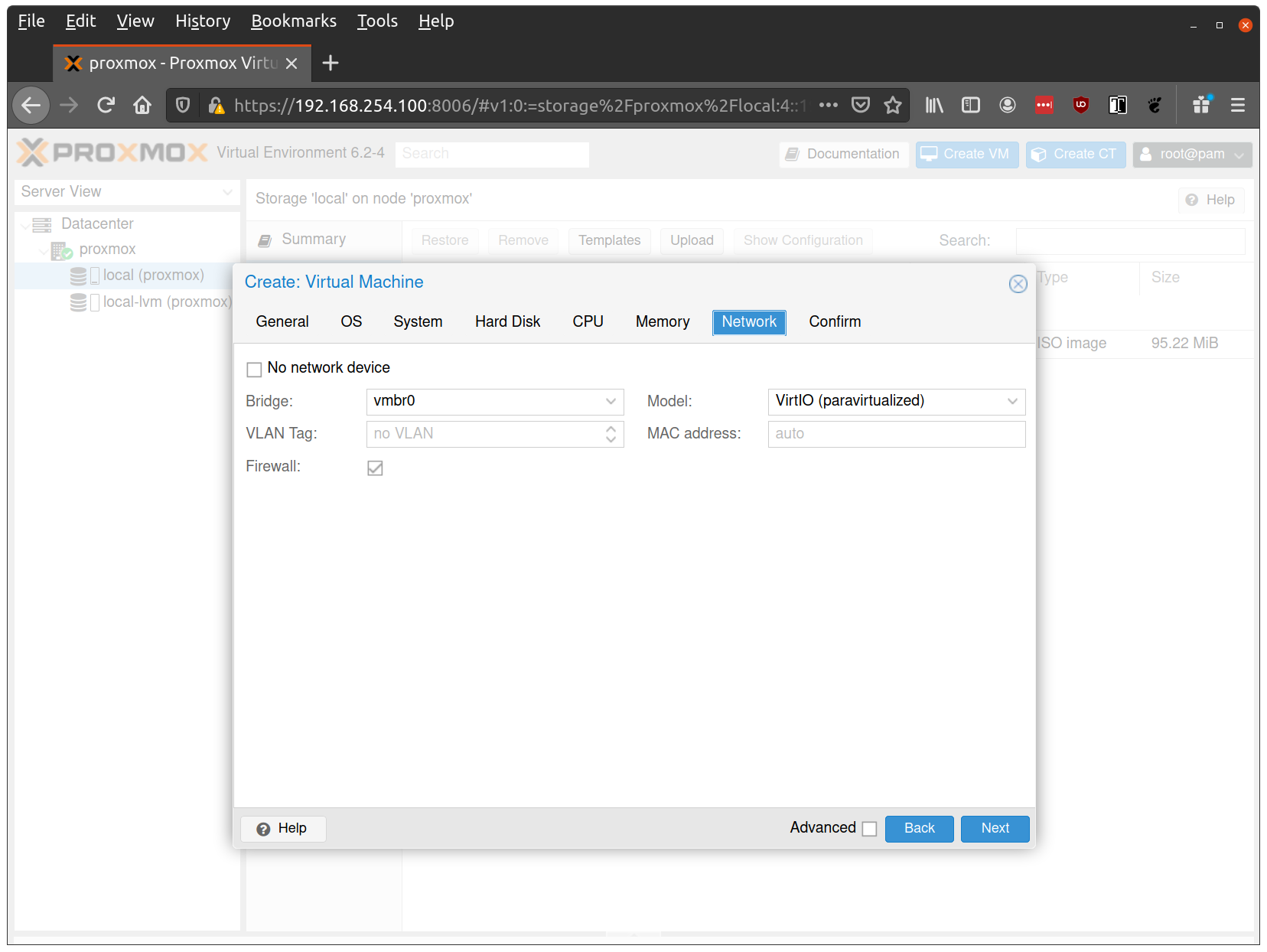

Keep the default values for networking, verifying that the VM is set to come up on the bridge interface:

Finish creating the VM by clicking through the “Confirm” tab and then “Finish”.

Repeat this process for a second VM to use as a worker node. Repeat it again for any additional nodes you want.

Start Control Plane Node

Once the VMs have been created and updated, start the VM that will be the first control plane node. This VM will boot the ISO image specified earlier and enter “maintenance mode”.

With DHCP server

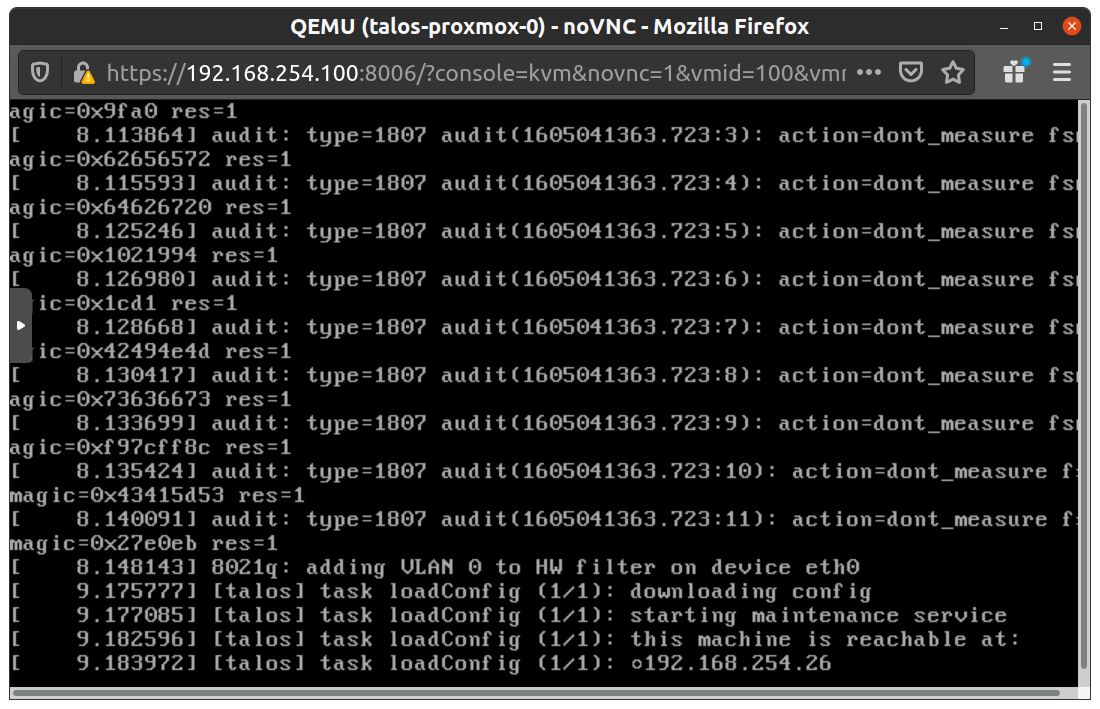

Once the machine has entered maintenance mode, there will be a console log that details the IP address that the node received.

Take note of this IP address, which will be referred to as $CONTROL_PLANE_IP for the rest of this guide.

If you wish to export this IP as a bash variable, simply issue a command like export CONTROL_PLANE_IP=1.2.3.4.
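Before exporting the address, it can help to confirm that it was copied from the console correctly. The following is a minimal sketch of an IPv4 format check; the function name `is_ipv4` is hypothetical, and 1.2.3.4 is the same placeholder used above.

```shell
# Return success if the argument looks like a dotted-quad IPv4 address.
# The body runs in a subshell so the IFS and set -f changes do not leak out.
is_ipv4() (
  case "$1" in
    ''|*.) exit 1 ;;          # reject empty input and a trailing dot
  esac
  IFS=.
  set -f
  set -- $1                   # split the address on dots
  [ "$#" -eq 4 ] || exit 1
  for octet in "$@"; do
    case "$octet" in
      ''|*[!0-9]*) exit 1 ;;  # reject empty or non-numeric octets
    esac
    [ "${#octet}" -le 3 ] && [ "$octet" -le 255 ] || exit 1
  done
  exit 0
)

CONTROL_PLANE_IP=1.2.3.4      # placeholder; use the address from the console
if is_ipv4 "$CONTROL_PLANE_IP"; then
  export CONTROL_PLANE_IP
  echo "CONTROL_PLANE_IP=$CONTROL_PLANE_IP"
else
  echo "not a valid IPv4 address: $CONTROL_PLANE_IP" >&2
fi
```

The check only validates the format; it does not confirm that the node is reachable.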

Without DHCP server

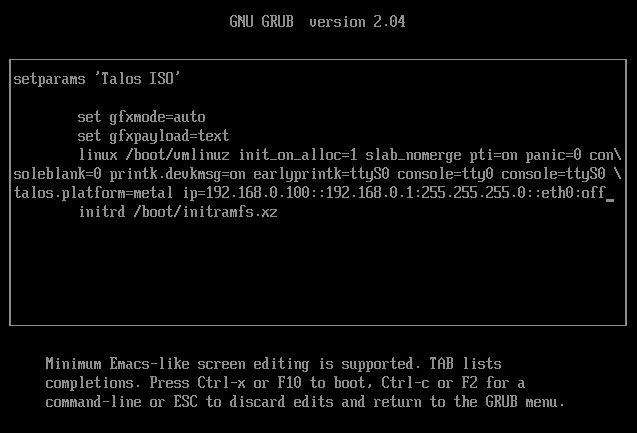

To apply the machine configuration in maintenance mode, the VM must have an IP address on the network. Without a DHCP server, you can set one manually at boot time.

Press e at boot time to edit the boot entry.

Then set the IP parameters for the VM on the kernel command line.

Format is:

ip=<client-ip>:<srv-ip>:<gw-ip>:<netmask>:<host>:<device>:<autoconf>

For example, with $CONTROL_PLANE_IP set to 192.168.0.100 and a gateway of 192.168.0.1:

linux /boot/vmlinuz init_on_alloc=1 slab_nomerge pti=on panic=0 consoleblank=0 printk.devkmsg=on earlyprintk=ttyS0 console=tty0 console=ttyS0 talos.platform=metal ip=192.168.0.100::192.168.0.1:255.255.255.0::eth0:off

Then press Ctrl-x or F10 to boot.
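The seven-field ip= format is easy to get wrong by one colon. As a sketch, the helper below (the name `build_ip_param` is hypothetical) assembles the argument from its fields in the order given in the format line; fields left empty produce the doubled colons seen in the example.

```shell
# Assemble the kernel ip= argument from its seven colon-separated fields:
# client-ip, srv-ip, gw-ip, netmask, host, device, autoconf.
# Pass "" for any field you want to leave empty.
build_ip_param() {
  client_ip="$1"; srv_ip="$2"; gw_ip="$3"; netmask="$4"
  host="$5"; device="$6"; autoconf="$7"
  printf 'ip=%s:%s:%s:%s:%s:%s:%s\n' \
    "$client_ip" "$srv_ip" "$gw_ip" "$netmask" "$host" "$device" "$autoconf"
}

# Reproduces the value used in the example kernel command line.
build_ip_param 192.168.0.100 "" 192.168.0.1 255.255.255.0 "" eth0 off
```

The output is the string to append to the linux line in the boot entry editor.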

Generate Machine Configurations

With the IP address above, you can now generate the machine configurations to use for installing Talos and Kubernetes. Issue the following command, updating the output directory, cluster name, and control plane IP as you see fit:

talosctl gen config talos-proxmox-cluster https://$CONTROL_PLANE_IP:6443 --output-dir _out

This will create several files in the _out directory: controlplane.yaml, worker.yaml, and talosconfig.
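A quick way to confirm that generation succeeded is to check for the three files by name. The sketch below assumes the `_out` output directory used in this guide; the function name `check_generated_configs` is hypothetical.

```shell
# Verify that the three files produced by `talosctl gen config` exist in a directory.
# Returns 0 if all are present, 1 otherwise, and names any missing file on stderr.
check_generated_configs() {
  dir="$1"
  missing=0
  for f in controlplane.yaml worker.yaml talosconfig; do
    if [ ! -f "$dir/$f" ]; then
      echo "missing: $dir/$f" >&2
      missing=1
    fi
  done
  return "$missing"
}

if check_generated_configs _out; then
  echo "all machine configuration files are present"
else
  echo "re-run talosctl gen config before continuing" >&2
fi
```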

Create Control Plane Node

Using the controlplane.yaml generated above, you can now apply this config using talosctl.

Issue:

talosctl apply-config --insecure --nodes $CONTROL_PLANE_IP --file _out/controlplane.yaml

You should now see some action in the Proxmox console for this VM. Talos will be installed to disk, the VM will reboot, and then Talos will configure the Kubernetes control plane on this VM.

Note: This process can be repeated multiple times to create an HA control plane.

Create Worker Node

Create at least a single worker node using a process similar to the control plane creation above.

Start the worker node VM and wait for it to enter “maintenance mode”.

Take note of the worker node’s IP address, which will be referred to as $WORKER_IP.

Issue:

talosctl apply-config --insecure --nodes $WORKER_IP --file _out/worker.yaml

Note: This process can be repeated multiple times to add additional workers.
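When adding several workers, the same command can be looped over their addresses. The following sketch prints the commands by default and only runs them when DRY_RUN=0 is set; the function name `apply_worker_configs`, the DRY_RUN variable, and the example addresses are all hypothetical.

```shell
# Print (or run) the apply-config command for each worker IP given as an argument.
# DRY_RUN defaults to 1 so nothing is executed unless explicitly requested.
apply_worker_configs() {
  for ip in "$@"; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "talosctl apply-config --insecure --nodes $ip --file _out/worker.yaml"
    else
      talosctl apply-config --insecure --nodes "$ip" --file _out/worker.yaml
    fi
  done
}

# Example with placeholder addresses; replace them with the IPs shown on each console.
apply_worker_configs 192.168.0.101 192.168.0.102
```

Each worker must already be in maintenance mode when the command actually runs.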

Using the Cluster

Once the cluster is available, you can make use of talosctl and kubectl to interact with the cluster.

For example, to view current running containers, run talosctl containers for a list of containers in the system namespace, or talosctl containers -k for the k8s.io namespace.

To view the logs of a container, use talosctl logs <container> or talosctl logs -k <container>.

First, configure talosctl to talk to your control plane node by issuing the following, updating paths and IPs as necessary:

export TALOSCONFIG="_out/talosconfig"

talosctl config endpoint $CONTROL_PLANE_IP

talosctl config node $CONTROL_PLANE_IP

Bootstrap Etcd

Set the endpoints and nodes:

talosctl --talosconfig _out/talosconfig config endpoint <control plane 1 IP>

talosctl --talosconfig _out/talosconfig config node <control plane 1 IP>

Bootstrap etcd:

talosctl --talosconfig _out/talosconfig bootstrap

Retrieve the kubeconfig

At this point we can retrieve the admin kubeconfig by running:

talosctl --talosconfig _out/talosconfig kubeconfig .

Cleaning Up

To clean up, stop and delete the virtual machines from the Proxmox UI.

4 - VMware

Creating a Cluster via the govc CLI

In this guide we will create an HA Kubernetes cluster with two worker nodes.

We will use the govc CLI, which is part of the vmware/govmomi project on GitHub.

Prerequisites

Prior to starting, it is important to have the following infrastructure in place and available:

- DHCP server

- Load Balancer or DNS address for cluster endpoint

  - If using a load balancer, the most common setup is to balance tcp/443 across the control plane nodes on tcp/6443

  - If using a DNS address, the A record should return the addresses of the control plane nodes

Create the Machine Configuration Files

Generating Base Configurations

Using the DNS name or the address of the load balancer from the prerequisites, generate the base configuration files for the Talos machines:

$ talosctl gen config talos-k8s-vmware-tutorial https://<load balancer IP or DNS>:<port>

created controlplane.yaml

created worker.yaml

created talosconfig

For example, with a DNS name and port 6443:

$ talosctl gen config talos-k8s-vmware-tutorial https://<DNS name>:6443

created controlplane.yaml

created worker.yaml

created talosconfig

At this point, you can modify the generated configs to your liking.

Optionally, you can pass --config-patch with an RFC 6902 JSON patch, which is applied during config generation.

Validate the Configuration Files

$ talosctl validate --config controlplane.yaml --mode cloud

controlplane.yaml is valid for cloud mode

$ talosctl validate --config worker.yaml --mode cloud

worker.yaml is valid for cloud mode

Set Environment Variables

govc makes use of the following environment variables:

export GOVC_URL=<vCenter url>

export GOVC_USERNAME=<vCenter username>

export GOVC_PASSWORD=<vCenter password>

Note: If your vCenter installation makes use of self-signed certificates, you’ll want to export GOVC_INSECURE=true.

There are some additional variables that you may need to set:

export GOVC_DATACENTER=<vCenter datacenter>

export GOVC_RESOURCE_POOL=<vCenter resource pool>

export GOVC_DATASTORE=<vCenter datastore>

export GOVC_NETWORK=<vCenter network>
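A missing variable tends to surface later as a confusing govc error, so a short pre-flight check can save time. This is a sketch only: the function name `check_govc_env` is hypothetical, and it checks just the three variables listed first above, treating the additional ones as optional.

```shell
# Report any of the three core govc variables that are unset or empty.
# Returns 0 when all are set, 1 otherwise.
check_govc_env() {
  missing=0
  for name in GOVC_URL GOVC_USERNAME GOVC_PASSWORD; do
    eval "value=\${$name:-}"   # indirect lookup of the variable named in $name
    if [ -z "$value" ]; then
      echo "missing environment variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

if check_govc_env; then
  echo "govc environment looks complete"
else
  echo "export the variables listed above before running govc" >&2
fi
```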

Download the OVA

A talos.ova asset is published with each release.

We will refer to the version of the release as $TALOS_VERSION below.

It can be easily exported with export TALOS_VERSION="v0.3.0-alpha.10" or similar.

curl -LO https://github.com/siderolabs/talos/releases/download/$TALOS_VERSION/talos.ova

Import the OVA into vCenter

We’ll need to repeat this step for each Talos node we want to create. In a typical HA setup, we’ll have 3 control plane nodes and N workers. In the following example, we’ll set up an HA control plane with two worker nodes.

govc import.ova -name talos-$TALOS_VERSION /path/to/downloaded/talos.ova

Create the Bootstrap Node

We’ll clone the OVA to create the bootstrap node (our first control plane node).

govc vm.clone -on=false -vm talos-$TALOS_VERSION control-plane-1

Talos makes use of the guestinfo facility of VMware to provide the machine/cluster configuration.

This can be set using the govc vm.change command.

To facilitate persistent storage using the vSphere cloud provider integration with Kubernetes, disk.enableUUID=1 is used.

govc vm.change \

-e "guestinfo.talos.config=$(base64 controlplane.yaml)" \

-e "disk.enableUUID=1" \

-vm /ha-datacenter/vm/control-plane-1
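The base64 tool wraps its output differently on GNU and BSD/macOS systems. The sketch below strips newlines so the encoded value is a single line everywhere, then decodes it again as a sanity check. The function name `encode_config` is hypothetical, the file content is a placeholder rather than a real machine configuration, and this guide does not state whether line wrapping matters to govc or Talos.

```shell
# Encode a file as single-line base64, independent of the platform's wrap default.
encode_config() {
  base64 < "$1" | tr -d '\n'
}

# Round-trip check on a throwaway file (placeholder content, not a real config).
tmp="$(mktemp)"
printf 'version: v1alpha1\n' > "$tmp"
encoded="$(encode_config "$tmp")"
decoded="$(printf '%s' "$encoded" | base64 --decode)"
if [ "$decoded" = "$(cat "$tmp")" ]; then
  echo "round trip ok"
else
  echo "round trip failed" >&2
fi
rm -f "$tmp"
```

If single-line output turns out to be needed, `$(encode_config controlplane.yaml)` could replace the `$(base64 ...)` substitution in the command above.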

Update Hardware Resources for the Bootstrap Node

- -c is used to configure the number of CPUs

- -m is used to configure the amount of memory (in MB)

govc vm.change \

-c 2 \

-m 4096 \

-vm /ha-datacenter/vm/control-plane-1

The following can be used to adjust the ephemeral disk size.

govc vm.disk.change -vm control-plane-1 -disk.name disk-1000-0 -size 10G

govc vm.power -on control-plane-1

Create the Remaining Control Plane Nodes

govc vm.clone -on=false -vm talos-$TALOS_VERSION control-plane-2

govc vm.change \

-e "guestinfo.talos.config=$(base64 controlplane.yaml)" \

-e "disk.enableUUID=1" \

-vm /ha-datacenter/vm/control-plane-2

govc vm.clone -on=false -vm talos-$TALOS_VERSION control-plane-3

govc vm.change \

-e "guestinfo.talos.config=$(base64 controlplane.yaml)" \

-e "disk.enableUUID=1" \

-vm /ha-datacenter/vm/control-plane-3

govc vm.change \

-c 2 \

-m 4096 \

-vm /ha-datacenter/vm/control-plane-2

govc vm.change \

-c 2 \

-m 4096 \

-vm /ha-datacenter/vm/control-plane-3

govc vm.disk.change -vm control-plane-2 -disk.name disk-1000-0 -size 10G

govc vm.disk.change -vm control-plane-3 -disk.name disk-1000-0 -size 10G

govc vm.power -on control-plane-2

govc vm.power -on control-plane-3

Update Settings for the Worker Nodes

govc vm.clone -on=false -vm talos-$TALOS_VERSION worker-1

govc vm.change \

-e "guestinfo.talos.config=$(base64 worker.yaml)" \

-e "disk.enableUUID=1" \

-vm /ha-datacenter/vm/worker-1

govc vm.clone -on=false -vm talos-$TALOS_VERSION worker-2

govc vm.change \

-e "guestinfo.talos.config=$(base64 worker.yaml)" \

-e "disk.enableUUID=1" \

-vm /ha-datacenter/vm/worker-2

govc vm.change \

-c 4 \

-m 8192 \

-vm /ha-datacenter/vm/worker-1

govc vm.change \

-c 4 \

-m 8192 \

-vm /ha-datacenter/vm/worker-2

govc vm.disk.change -vm worker-1 -disk.name disk-1000-0 -size 50G

govc vm.disk.change -vm worker-2 -disk.name disk-1000-0 -size 50G

govc vm.power -on worker-1

govc vm.power -on worker-2
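The per-node commands above follow one pattern, so they can be generated from a small table of names and sizes. The following sketch only prints the commands for review and executes nothing; the function name `print_node_commands` is hypothetical, and the /ha-datacenter/vm/ path and disk-1000-0 disk name are copied from the examples in this guide and may differ in your environment.

```shell
# Print the govc commands that create one node, without executing them.
# Arguments: VM name, config file, CPU count, memory in MB, disk size.
print_node_commands() {
  name="$1"; config="$2"; cpus="$3"; mem="$4"; disk="$5"
  cat <<EOF
govc vm.clone -on=false -vm talos-\$TALOS_VERSION $name
govc vm.change -e "guestinfo.talos.config=\$(base64 $config)" -e "disk.enableUUID=1" -vm /ha-datacenter/vm/$name
govc vm.change -c $cpus -m $mem -vm /ha-datacenter/vm/$name
govc vm.disk.change -vm $name -disk.name disk-1000-0 -size $disk
govc vm.power -on $name
EOF
}

# Remaining control plane nodes, then the two workers, with the sizes used above.
for n in 2 3; do
  print_node_commands "control-plane-$n" controlplane.yaml 2 4096 10G
done
for n in 1 2; do
  print_node_commands "worker-$n" worker.yaml 4 8192 50G
done
```

The escaped `\$` keeps `$TALOS_VERSION` and the base64 substitution unexpanded in the printed text, so they are evaluated only when the reviewed commands are run.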

Retrieve the kubeconfig

At this point we can retrieve the admin kubeconfig by running:

talosctl --talosconfig talosconfig config endpoint <control plane 1 IP>

talosctl --talosconfig talosconfig config node <control plane 1 IP>

talosctl --talosconfig talosconfig kubeconfig .

5 - Xen

Talos is known to work on Xen; however, it is currently undocumented.